smileyworld

Member · Posts: 69 · Days Won: 1 · Achievements: Regular Member (3/7) · Reputation: 14

I found software that checks most of my requirements -> wisemapping. If you want to try it out in Docker, you can have a look here.

2 replies · Tagged with: software, self hosted (and 1 more)

I am looking for a self-hosted mind map tool. I don't mind whether it runs in DSM as a package or in Docker as a container, but it should have features similar to Coggle. Does anyone have recommendations?

2 replies · Tagged with: software, self hosted (and 1 more)

- Outcome of the update: SUCCESSFUL
- DSM version prior update: DSM 7.1.1-42962
- Loader version and model: PeterSuh's tcrp v0.9.4.3-2 JOT DS3622xs+
- Using custom extra.lzma: NO
- Installation type: Baremetal
- Additional comments:
  + Unplugged the X540-T2 NIC before upgrading
  + Built a new loader
  + Upgraded the existing DSM via Synology Assistant


@AbuMoosa It also works if the host is Xpenology and the recording server is a virtualized DSM. @Yuriy Pelekh It depends on where you add your cameras. If you add all cameras to NAS1, all resources (CPU/RAM/storage) will be taken from NAS1. However, if you also add cameras to other servers besides NAS1, the resources will be shared.


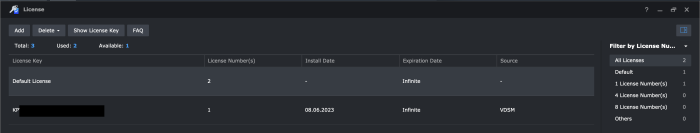

I just tested it, and it is possible to get by with only one Xpenology system. On the host (Xpenology 7.2-64561) I have 2 free licenses plus 1 license from VDSM (7.2-64570), which is shared via CMS. The VDSM license was purchased and had to be activated in VDSM, because the license cannot be activated on the Xpenology host. Alternatively, you could simply use a different loader that offers more free licenses:

- DVA1622: CPU with 4 physical cores / 4 virtual cores
- DVA3221: CPU with 4 physical cores / 4 virtual cores

Sources: CPU physical / virtual cores; Tutorial: sharing licenses between Synology / Xpenology / VDSM


Docker: do I really need it and what's next?

smileyworld replied to Onknight's topic in Third Party Packages

I just stumbled across this categorized list of Docker containers. If anyone is interested, take a look -> https://mariushosting.com/docker/

Hey argate7, personally I would try it with this guide: https://www.digitalocean.com/community/tutorials/how-to-install-and-set-up-laravel-with-docker-compose-on-ubuntu-22-04


Virtualbox.spk can't be installed in DSM 7

smileyworld replied to smileyworld's question in General Questions

I found the answer to my original problem on Synology's website: you need the Pro version for nested VMs. If anyone needs it, here is the documentation.

3 replies · Tagged with: virtualbox, dsm 7 (and 2 more)

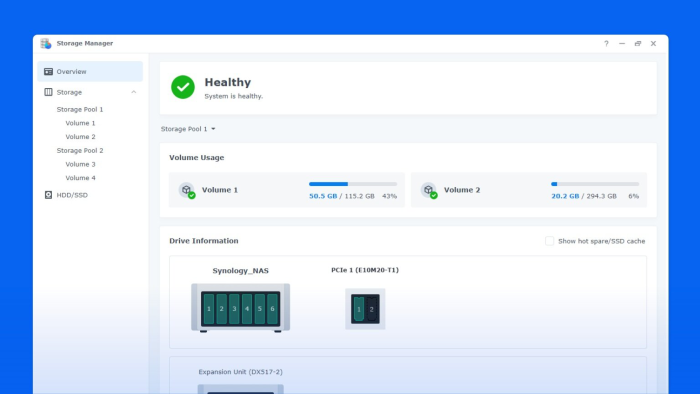

Is it possible to display expansion units (Synology DX1222) or PCI cards (Intel X540-T2) in DSM7-TCRP like this? I am asking because it would be nice to see all 22 of my drives and, for example, instantly know which drive I have to replace. For now, it looks like this. My guess is that DSM only displays 12 drives in use because the DS3622xs+ has only 12 slots.


Help needed - Expand Xpenology (DSM7) beyond x drives

smileyworld replied to smileyworld's topic in The Noob Lounge

I can finally report that I fixed it. Here is what I did to resolve my issue:

- Tried to determine whether it was a hardware or a software failure: connected 8 disks to one HBA (HBA2 was not installed at that time) and checked that every disk was listed (Computer Management in Windows Server 2016; another OS would probably work as well).
- Unplugged HBA1, connected HBA2 and again checked whether all 8 disks were listed. One disk was missing, so I checked all connectors and found a loose one.
- Flashed the firmware of my second HBA.
- Connected both HBAs and installed all 22 disks; all of them were listed in Windows Server 2016.
- Unplugged my X540-T2 10Gbit NIC.
- Installed Xpenology by running these commands one at a time: `./rploader.sh clean`, `./rploader.sh update`, `./rploader.sh fullupgrade`, `./rploader.sh identifyusb`, `./rploader.sh serialgen DS3622xs+`, `vi user_config.json`

Then I adapted my user_config.json to my environment:

    {
      "extra_cmdline": {
        "pid": "0x1000",
        "vid": "0x090c",
        "sn": "xxxxxxxxxxxxx",
        "mac1": "xxxxxxxxxxxxx",
        "mac2": "xxxxxxxxxxxxx",
        "mac3": "xxxxxxxxxxxxx",
        "SataPortMap": "",
        "DiskIdxMap": ""
      },
      "synoinfo": {
        "esataportcfg": "0x00",
        "usbportcfg": "0xc00000",
        "internalportcfg": "0x3fffff",
        "maxdisks": "22",
        "support_bde_internal_10g": "no",
        "support_disk_compatibility": "no",
        "support_memory_compatibility": "no"
      },
      "ramdisk_copy": {}
    }

Finally, I finished the TCRP loader and installed DSM:

- Ran `./rploader.sh build broadwellnk-7.1.0-42661`, `./rploader.sh backup`, `./rploader.sh backuploader` and `exitcheck.sh reboot`.
- Plugged my X540 back in.
- Connected my LAN cable to one of the LAN ports.
- Booted from USB and installed the appropriate DSM_DS3622xs+_42661.pat.

Special thanks to @Peter Suh, @IG-88 and @flyride for your support.
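The `synoinfo` port values in this config are bitmasks in which each set bit stands for one port index: `0x3fffff` has the lowest 22 bits set, one per disk (matching `maxdisks` 22), and `0xc00000` sets the next two bits (indices 22 and 23) for USB, with no eSATA ports. The minimal Python sketch below recomputes these values for a given number of ports. It assumes the common layout of internal ports first, then eSATA, then USB, and assumes 2 USB ports by default; the helper names are hypothetical and not part of rploader or DSM.

```python
def port_mask(count: int, offset: int = 0) -> int:
    """Return a bitmask with `count` consecutive bits set, starting at bit `offset`."""
    return ((1 << count) - 1) << offset


def synoinfo_masks(internal: int, esata: int = 0, usb: int = 2) -> dict:
    """Lay out internal, eSATA and USB ports back to back (assumed convention)
    and return the synoinfo values as strings, like in user_config.json."""
    internal_mask = port_mask(internal)
    esata_mask = port_mask(esata, internal)
    usb_mask = port_mask(usb, internal + esata)
    # Sanity check: no port index may belong to two port types.
    if internal_mask & esata_mask or internal_mask & usb_mask or esata_mask & usb_mask:
        raise ValueError("port masks overlap")
    return {
        "maxdisks": str(internal),
        "internalportcfg": hex(internal_mask),
        "esataportcfg": f"0x{esata_mask:02x}",
        "usbportcfg": hex(usb_mask),
    }


if __name__ == "__main__":
    for key, value in synoinfo_masks(internal=22).items():
        print(f"{key}: {value}")
```

For 22 internal ports this prints `maxdisks: 22`, `internalportcfg: 0x3fffff`, `esataportcfg: 0x00` and `usbportcfg: 0xc00000`, the same values as in the config above. Changing the disk count shifts the USB bits so they always start right after the last internal port.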

Help needed - Expand Xpenology (DSM7) beyond x drives

smileyworld replied to smileyworld's topic in The Noob Lounge

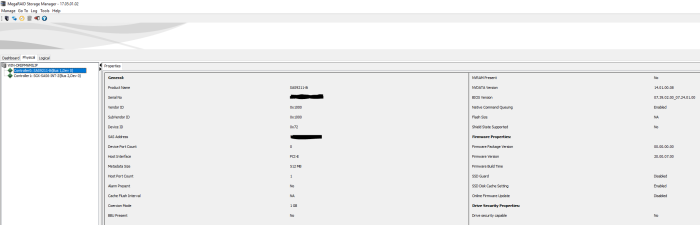

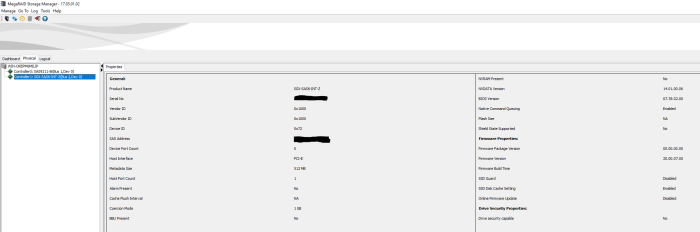

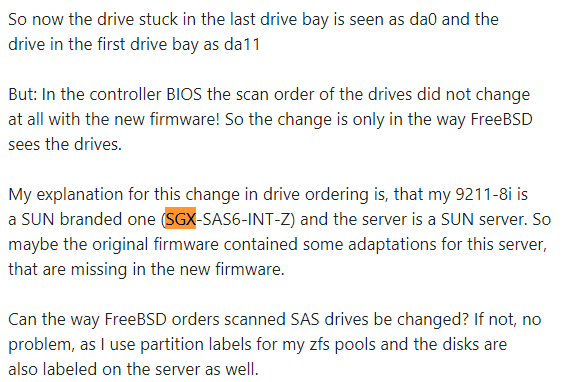

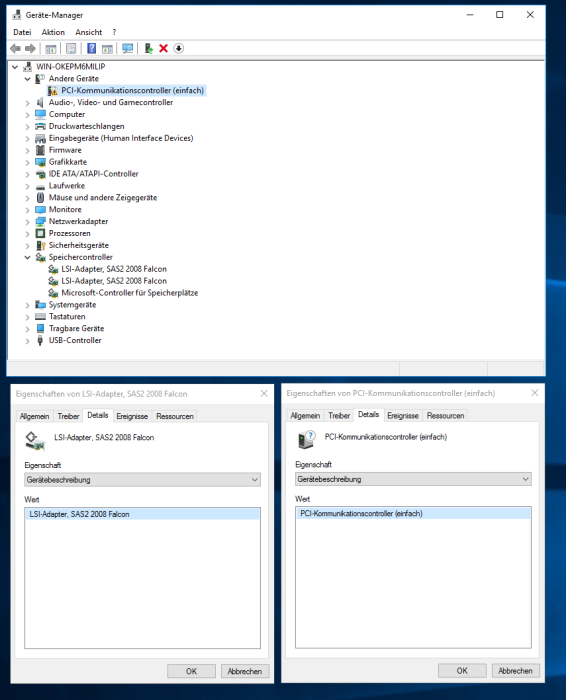

@Peter Suh Yes, that's true. It looks like it's a firmware issue after all. I connected my NAS to a display and was wondering why only one of the HBAs was showing up in the BIOS (Ctrl+C). Two hours later, I learned that I have two different controller cards, even though both were sold as LSI 9211-8i (IT mode). It seems there was so much rebranding that the companies used the same or similar chips (SAS2008) but different BIOSes. At least someone mentioned this here.

MegaRAID, however, is able to see both HBAs and collects the following data from both cards. I thought troubleshooting would be easier if I simplified things (installed the same firmware/BIOS on both), but oh boy, it keeps getting more and more complex 😅

HBA SGX-SAS6-INT-Z:
- has BIOS version 07.39.02.00_07.24.01.00
- is working, but I cannot enter the BIOS at all
- won't let me "update" the BIOS to 07.39.02.00, probably because downgrading firmware is not allowed
- Windows Server 2016 can't find a suitable driver for this card

HBA SAS9211-8i:
- has BIOS version 07.39.02.00
- has a working BIOS, which I can enter with Ctrl+C
- Windows Server 2016 installs the best driver for this card on its own

So at this point I do not know where to find either a firmware newer than 07.39.02.00_07.24.01.00 with a working BIOS, or a way to downgrade the SGX-SAS6-INT-Z HBA to 07.39.02.00. Furthermore, I am not sure whether this would fix my/our problem. Do both of your HBAs have the same BIOS version?

Help needed - Expand Xpenology (DSM7) beyond x drives

smileyworld replied to smileyworld's topic in The Noob Lounge

There also seems to be a known issue with duplicate drives, which is even documented by Broadcom itself. Interestingly, this is also a bad-firmware problem. https://www.broadcom.com/support/knowledgebase/1211161495925/flash-upgrading-firmware-on-lsi-sas-hbas-upgraded-firmware-on-92

Help needed - Expand Xpenology (DSM7) beyond x drives

smileyworld replied to smileyworld's topic in The Noob Lounge

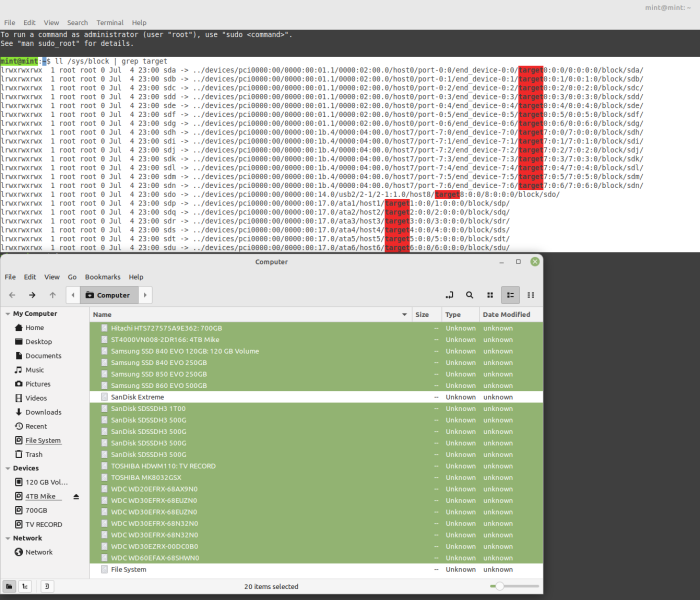

@Peter Suh I am no longer so sure that it has solely to do with TCRP or DSM7. I tried plugging in 22 drives, and even in Mint only 20 are recognized. Maybe there is also an issue with the HBA or a cable. I also saw during boot that my two HBAs are on different BIOSes. My plan is to identify the missing drives first and then move drives to different slots, so I can be sure all drives are OK. Next, I will upgrade the firmware of my HBAs. If that doesn't help, then I really don't know where else to look.
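To identify which drives are missing, it helps to list exactly what the kernel detects in a live Linux system such as Mint. The following minimal Python sketch (an assumption, not something used in the thread) lists the `sd*` entries under `/sys/block` and reads each disk's model string from sysfs; the function names and the expected count of 22 are illustrative. Note that the USB stick Mint was booted from also shows up as an `sd*` device, so it counts toward the total.

```python
from pathlib import Path

SYS_BLOCK = Path("/sys/block")


def list_sd_disks(sys_block: Path = SYS_BLOCK) -> list:
    """Return the names of SCSI/SATA disks (sda, sdb, ...) the kernel currently sees."""
    if not sys_block.is_dir():
        return []
    return sorted(p.name for p in sys_block.iterdir() if p.name.startswith("sd"))


def read_model(disk: str, sys_block: Path = SYS_BLOCK) -> str:
    """Read the model string exposed in sysfs, or '?' if it is not available."""
    model_file = sys_block / disk / "device" / "model"
    try:
        return model_file.read_text().strip()
    except OSError:
        return "?"


if __name__ == "__main__":
    expected = 22  # drives physically installed (illustrative value from the post)
    disks = list_sd_disks()
    for disk in disks:
        print(disk, read_model(disk))
    print(f"{len(disks)} sd* devices detected, {expected} drives installed")
```

To match a detected disk to a physical drive by its serial number, `lsblk -o NAME,MODEL,SERIAL` gives a similar listing straight from the shell.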

Help needed - Expand Xpenology (DSM7) beyond x drives

smileyworld replied to smileyworld's topic in The Noob Lounge

Yes, that was me, and I checked it. I will report back tomorrow. I may have found something that would explain the behavior, but I want to double-check first.