Maxhawk

Member-

Posts

23 -

Joined

-

Last visited

Maxhawk's Achievements

Junior Member (2/7)

0

Reputation

-

The numbers match the "Utilization" figure in Resource Monitor. I did look in Task Manager at the top memory users in the Services and Processes tabs and I didn't see any one thing that was using up gigabytes of memory. The highest users were VMware Tools and snmpd, and they still are after a restart. For future debugging, I've saved screenshots of the Services and Processes tabs for comparison later when the memory usage creeps up. FWIW, here's the metric I'm using (it precisely matches the utilization per Resource Monitor): SELECT ((last("memTotalReal") - last("memAvailReal") - last("memBuffer") - last("memCached")) / last("memTotalReal"))
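As a quick sanity check on the metric above, the same arithmetic can be sketched in Python. The function name and the sample values are hypothetical; only the formula comes from the query, and all four inputs are assumed to be in the same unit (for example kB, as reported over SNMP).

```python
def memory_utilization(total, avail, buffers, cached):
    """Fraction of memory in use, excluding free memory, buffers and page cache.

    Mirrors the query: (total - avail - buffers - cached) / total.
    All arguments must use the same unit.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    return (total - avail - buffers - cached) / total


# Made-up sample values in kB, purely for illustration.
example = memory_utilization(total=16000, avail=8000, buffers=1000, cached=3000)
```

Because buffers and cache are subtracted, a rising value here means memory that the kernel cannot simply reclaim, which is why the creep is worth tracking.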

-


I have DSM 6.1.7 15284 installed with Jun 1.02b in an ESXi 6.5 VM. It's been running for over 1.5 years with little issue.

One time in the past, I was not able to access the shared folders in Windows. I found the DSM GUI to be unresponsive and noticed that my memory utilization was >90%. (I use Grafana/Telegraf to gather stats on my network.) After restarting DSM everything worked normally and memory utilization was back to "normal" (<10%). At the time I had allocated 8GB of RAM to the VM so I decided to double the allocation to 16GB.

Fast forward to this week and I noticed that the memory utilization was increasing over time. I only capture a 30 day window of data, but you can see in the following graph that it went from around 15% to 45% in 30 days. I decided to restart DSM and the utilization dropped to 5%.

https://i.imgur.com/SJMIagJh.png

Has anyone else experienced memory usage creep like this? I have the following packages running in DSM: DDSM in docker, Active Backup for Business, Drive, Hyper Backup, Hyper Backup Vault, Log Center, Moments, Replication Service, Snapshot Replication, Universal Search, VMware Tools, and VPN Server.

In the future as the memory usage continues to go up, is there a command I can run (CLI) that will tell me what's using the memory? I've tried htop and top but it wasn't obvious what was using the memory as the top users were only in the 100s of MB range.

At the end of the day I can always manually reboot as memory usage rises, but it sure would be nice to find the root cause to prevent it from occurring in the first place.
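One thing that might be worth checking when no single process stands out in top or htop is memory held outside processes, such as kernel slab or shared memory (tmpfs). The sketch below is only an illustration, not a diagnosis of this system: it parses text in the standard Linux /proc/meminfo format, and the function names are hypothetical. It assumes a Python 3 interpreter is available on the box.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of {field: value in kB}."""
    info = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines that are not "Field: value" pairs
        parts = rest.split()
        if parts and parts[0].isdigit():
            info[name.strip()] = int(parts[0])
    return info


def non_process_summary(info):
    """Report used memory (total - free - buffers - cached) plus slab and shmem."""
    used = (info["MemTotal"] - info["MemFree"]
            - info.get("Buffers", 0) - info.get("Cached", 0))
    return {
        "used_kB": used,
        "slab_kB": info.get("Slab", 0),    # kernel object caches
        "shmem_kB": info.get("Shmem", 0),  # tmpfs and shared memory
    }
```

On a live system the input would come from reading the file, for example `non_process_summary(parse_meminfo(open("/proc/meminfo").read()))`. Comparing the output right after a reboot with the output once utilization has crept up would show whether slab or shmem accounts for the growth.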

-

Thanks for the suggestion, but as I recall, 1.03b with 6.2.x doesn't support 10 GbE since there is no VMXNET3 driver. Or have there been advancements since I tried it a year ago?

-

I've got ESXi 6.7U3 running on a Dell R510. The NICs include: (1) the built-in dual Broadcom 1 GbE ports, which work as expected, and (2) an Aquantia AQN-107 NBASE-T PCIe card which is not playing nicely with XPE. XPE uses loader 1.02b with DS3615xs 6.1.7 Update 3. The network is set up as VMXNET3, which is required to support 10 GbE.

I have the Aquantia driver package installed in ESXi. ESXi reports it as a 10000 Mbps connection. I have an Ubuntu VM and an iperf test to it comes back at 10 Gbit wire speed (9.4 Gbps), so I know ESXi and the NIC are working properly.

With the AQN-107 I'm able to access the XPE GUI. The XPE network control panel reports a 10000 Mbps connection. However, an iperf test fails and copying a large file from Windows (SMB) fails. Both tests work fine when I configure the VM to use the built-in Broadcom NIC.

My thought is that since ESXi is providing a "virtual" network connection to XPE, no additional drivers should be necessary in the XPE loader. Any suggestions on what to try next?

I have two other ESXi boxes (R720xd & R310) running XPE 6.1.7 U3 that both use a Mellanox ConnectX-2 card and they both run at 10 Gbit speeds with no issue. I want to use the Aquantia card in the R510 because I have extra copper ports available but no more SFP+ ports available.

-

I finally got it working! It turned out that none of the commands in set sata_args were being executed. I had to move DiskIdxMap, SataPortMap, and SasIdxMap to common_args_3615. Once I did that, I was able to figure out by trial and error that DSM sees 3 controllers. The trick that worked was to set DiskIdxMap=0C0D. This also resulted in proper numbering of the data drives starting with Drive 1. I think setting DiskIdxMap=0C0D00 would be more proper and would have worked too. I left SataPortMap=1 and SasIdxMap=0.
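To make the DiskIdxMap values easier to read, here is a small Python sketch that decodes them. It rests on an assumption taken from community write-ups rather than anything confirmed in this post: the value is read two hex digits at a time, one pair per controller in detection order, and each pair is the zero-based index of that controller's first disk. The function names are hypothetical.

```python
def parse_disk_idx_map(value):
    """Split a DiskIdxMap string into zero-based starting indexes, one per controller.

    Assumes two hex digits per controller, in detection order.
    """
    if len(value) % 2 != 0:
        raise ValueError("DiskIdxMap needs two hex digits per controller")
    return [int(value[i:i + 2], 16) for i in range(0, len(value), 2)]


def first_drive_numbers(value):
    """Convert the zero-based indexes to the 1-based drive numbers DSM displays."""
    return [index + 1 for index in parse_disk_idx_map(value)]


# Under the stated assumption, 0C0D00 places the three controllers' first
# disks at drive 13, drive 14 and drive 1 respectively.
starts = first_drive_numbers("0C0D00")
```

Under that reading, 0C0D pushes the first two controllers out to drives 13 and 14, and 0C0D00 additionally states explicitly that the third controller starts at Drive 1, which fits the numbering described above.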

-

DSM version: 3615xs 6.1.7-15284 Update 3
Jun bootloader 1.02b
Hardware: Dell R720xd with H310 mini (for my VMs) and PCIe H310 flashed to IT mode
Software: VMware ESXi 6.5U1 with PCIe H310 passed to Xpenology
----

I need some assistance with properly setting sata_args in synoboot.img in a virtual environment.

I've had Xpenology running smoothly for a year and a half as a VM on my Dell R720xd. The chassis holds 12 drives and for quite a while I've run with two volumes: (1) 8x8TB RAID10 and (2) 2x8TB RAID0. Other than setting the MAC address and S/N in grub.cfg I left everything "stock".

Very recently I decided I wanted to fill the last 2 empty slots with a couple more 8TB drives and make a third volume. When I did that, DSM reported that my RAID10 array had degraded and one of my original 8TB drives was missing, reporting only 11 drives. After a lot of trial and error, I determined that if there are 11 drives installed, DSM is OK. When a 12th drive is installed, I lose one of the originals. Am I running into an issue where my boot drive + 11 = 12 maximum drives?

In ESXi, I have one drive defined, which is the boot drive. This shows up as a SATA drive in DSM's dmesg. My pass-through drives all show up as SCSI drives. In dmesg I can see that the 12th disk is recognized (/dev/sdas), but the drive doesn't show up in DSM.

My sata_args is as follows:

set sata_args='sata_uid=1 sata_pcislot=5 synoboot_satadom=1 DiskIdxMap=0C SataPortMap=1 SasIdxMap=0'

I played around with DiskIdxMap and SataPortMap and the changes made zero difference. I found that DSM sees 32 SATA devices, which means SataPortMap=1 wasn't doing anything. I found a thread where someone said that moving it to common_args_3615 would make the system recognize it. When I did that, the disk numbering changed drastically (my first data drive started at drive 34 and it went to drive 2) but DSM could only see 6 drives and indicated my RAID10 volume had crashed.

Does anyone have any ideas on what I can try next? Perhaps move DiskIdxMap to common_args_3615? I also tried modifying synoinfo.conf (usbportconfig, esataportconfig, internalportcfg, and maxdisks) but this made no difference.

At this point, even with the original synoboot.img and without the missing drive, the volume is degraded and I'm going to have to do a repair to get it back to normal. The drive that was missing now says Initialized, Normal. I'm afraid to make any changes that may cause more drives to drop from the array, forcing me to restore everything from a backup.

Thanks for any help.

-

- Outcome of the update: SUCCESSFUL
- DSM version prior update: DSM 6.2.1-23824 Update 4
- Loader version and model: JUN'S LOADER v1.03b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: VM - ESXi 6.5U2 Dell R310
- Additional comments: none

-

Hardware and overall system/software topology questions

Maxhawk replied to Maxhawk's topic in The Noob Lounge

Sorry, I've not.

Hardware and overall system/software topology questions

Maxhawk replied to Maxhawk's topic in The Noob Lounge

I've had my R720xd since mid-January and have had Xpenology running on ESXi since then. I've had zero issues and I'm very happy with the setup. I'm using the H310 mini to control two 2½" SSDs in the rear flex bays for VM storage and an IT-flashed H310 for the 12 front bays. The H310 is passed through in ESXi so Xpenology can control the drives directly, with access to the SMART data and temperature readings. I can't comment on whether this would be an upgrade to your Supermicro. I can only say that everything has been 100% stable with 9 total VMs and it seems I'm barely taxing my dual Xeon 2630L CPUs.

- Outcome of the update: SUCCESSFUL
- DSM version prior update: DSM 6.1.6-15266
- Loader version and model: Jun's Loader v1.02b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: BAREMETAL - Gigabyte GA-P35-DS3R
- Additional comments: NO REBOOT REQUIRED

-

Anyone try Docker DDSM in an ESXi installation?

Maxhawk replied to Maxhawk's topic in Synology Packages

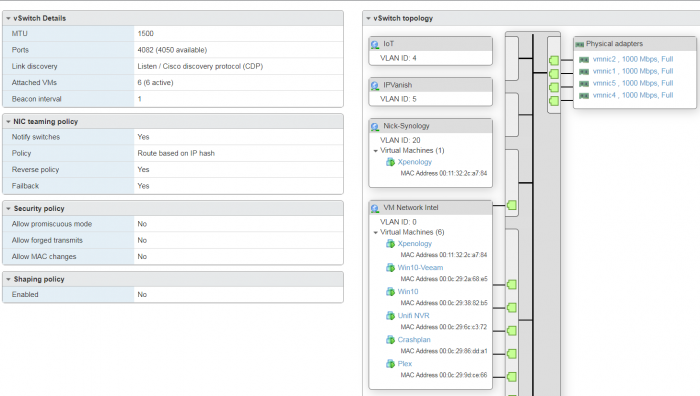

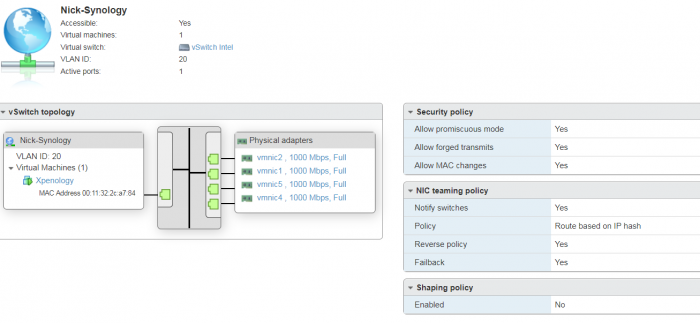

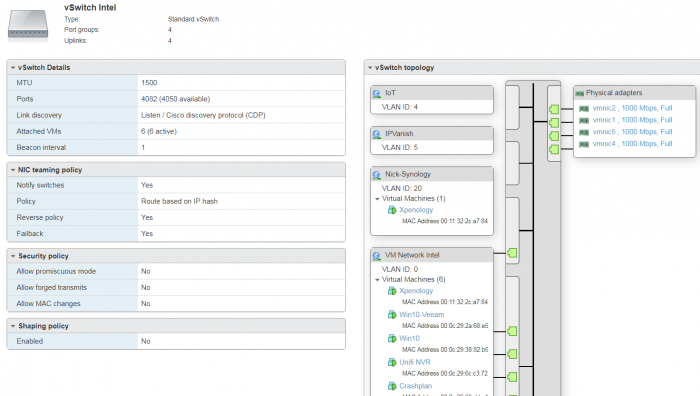

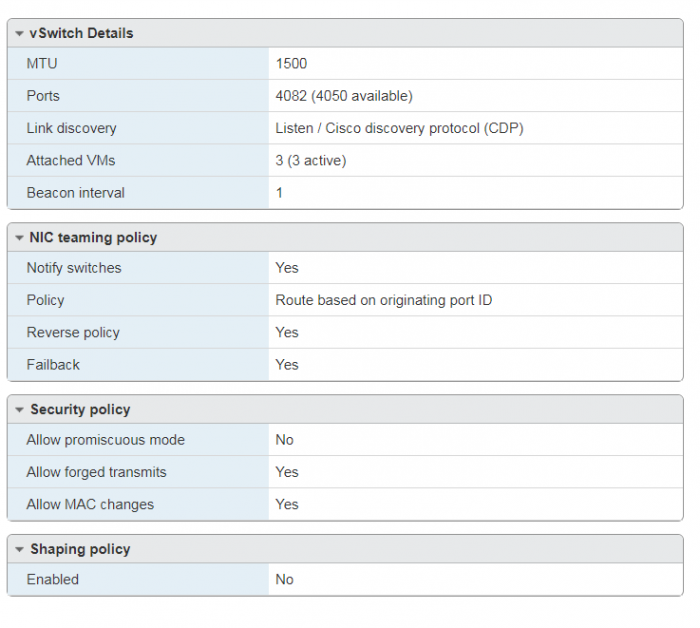

The DDSM network is called Nick-Synology. The only other change I made to the defaults was to change NIC teaming to Route based on IP hash, which should not affect this. I can't remember if I made changes to the security policy of the default vSwitch0 for the management network, but I see that it's not all set to No, FWIW. This switch is on a separate NIC port from vSwitch Intel.

Anyone try Docker DDSM in an ESXi installation?

Maxhawk replied to Maxhawk's topic in Synology Packages

Thanks, this worked! I know I tried setting one of those to Yes but not all three. Also, it turns out you don't have to turn these on for the entire vSwitch. I did it only on the port group where I specified the VLAN ID and DDSM got an IP address on the first try.

- Outcome of the update: SUCCESSFUL
- DSM version prior update: DSM 6.1.5-15254 Update 1
- Loader version and model: JUN'S LOADER v1.02b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: VM - ESXi 6.5 U1
- Additional comments: REBOOT REQUIRED

-

- Outcome of the update: SUCCESSFUL
- DSM version prior update: DSM 6.1.5-15254 Update 1
- Loader version and model: JUN'S LOADER v1.02b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: BAREMETAL - (Gigabyte GA-P35-DS3R)
- Additional comments: REBOOT REQUIRED, manual update with previously downloaded file

-

I'm trying to install a second instance of DSM by using DDSM in Docker in an ESXi environment. The reason I can't spin up another XPE VM instance is that all 12 of my drive bays on the backplane are being passed through by an H310 controller (flashed to IT mode = HBA). The issue I'm having with the ESXi VM instance is that I can't get network connectivity on the DDSM. I've tried DDSM on a separate bare metal installation and it worked fine set up the same way. The only significant difference is that one is bare metal while the other is ESXi. On ESXi I've presented XPE with 2 virtual network cards on different subnets. DSM gets an IP address from both subnets but DDSM can talk to neither of them. This is an ESXi issue, but I can't exactly go asking on a Synology forum about use with ESXi. I was just hoping that someone here may have done it so I know it's possible. Everything looks right as configured in ESXi. Thanks for reading.