aniel

Posts: 58

Posts posted by aniel

-

-

when i said i found a 6.2.3 modded one i was referring to this post, i just didn't realize

-

i was able to find a 6.2.3 mbr modded one, but my ethernet nic wasn't getting picked up, while with the original jun (gpt) one it is. regardless, what i'm trying to do is see if it is possible to run the bootloader from the same disk as the data. i have tried many times but i can't get it to work, and i have used 5 or more different tools, including MiniTool Partition Wizard. here is the link i found and was using as reference:

-

how can the gpt img be converted to mbr?

-

does this work with 6.2.2/6.2.3 (jun) and dsm 7 (redpill)?

-

can we go beyond 24 hdds?

-

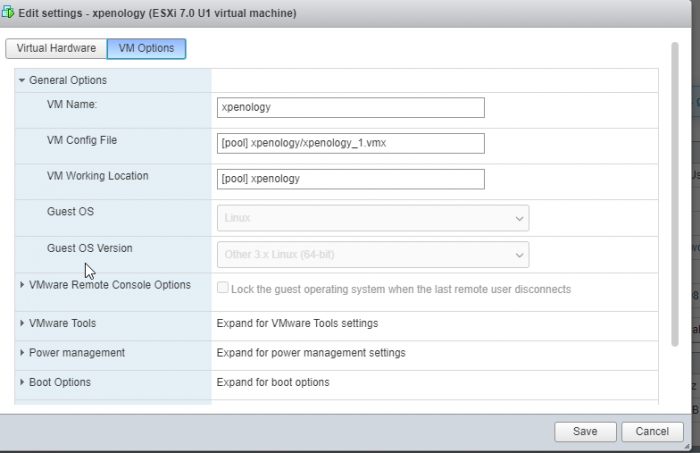

is this stable enough to run in esxi with live/important data? and can it run dsm 7?

-

-

-

i did try to replicate it using this link, but it doesn't like it when i go beyond 24 disks

-

@IG-88 i'm confused, are you saying we can go beyond 24 disks with jun's loader? if yes, how? and yes, i will give quicknick's a try.

-

@merve04 i have seen the video but i can't get it to work with jun's loader. i also wonder how stable it would be, if this can indeed be made to work with jun's loader.

-

-

On 1/23/2021 at 4:17 PM, IG-88 said:

is it possible to go beyond 24 disks with jun's loader? i have tried it, but it goes into recovery mode, with the option to recover.

-

On 1/23/2021 at 5:19 AM, IG-88 said:

yes and to help other people we usually document it by telling what the reason was

maybe (rare case, i know) someone tries to use the forum search (or another search engine like google)

my apologies, i didn't do it on purpose. anyway, here is the fix:

-

-

-

On 11/12/2020 at 5:51 AM, bearcat said:

@aniel Some more info on what loader, DSM version and your hardware would help us to help you

i was able to figure it out, kinda

-

3 hours ago, flyride said:

Did you try and install FixSynoboot?

yes sir (i will share some screenshots later on), and i also followed this comment of yours

-

my grub.cfg looks like this, but i keep getting a corrupted file error when trying to update or reinstall. any help will be appreciated.

    #set extra_args_3617='earlycon=uart8250,io,0x3f8,115200n8 earlyprintk loglevel=15'
    set extra_args_3617=''
    set common_args_3617='syno_hdd_powerup_seq=0 HddHotplug=0 syno_hw_version=DS3617xs vender_format_version=2 console=ttyS0,115200n8 withefi elevator=elevator quiet syno_port_thaw=1'
    set sata_args='sata_uid=1 sata_pcislot=5 synoboot_satadom=1 DiskIdxMap=0C SataPortMap=1 SasIdxMap=0'

-

i know this is an old post, but is it possible to go beyond 24 hdds?

-

@merve04 is it possible to go beyond 24 hdds?

-

On 9/27/2018 at 2:55 PM, Kanedo said:

The proper way to move synoboot to a much higher enumeration is to change DiskIdxMap in grub.cfg

On jun's 1.03b, the default is DiskIdxMap=0C

0C = Disk 13

Change it to something much higher

DiskIdxMap=1F

1F = Disk 32

For more info: https://github.com/evolver56k/xpenology/blob/master/synoconfigs/Kconfig.devices#L245

if i use this method then i cannot update the system
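
for anyone checking the hex math in the quote above, here is a minimal Python sketch. it assumes, as the two examples in the quote imply, that each pair of hex digits in DiskIdxMap is a zero-based index and that the disk number shown is one-based. the function name `disk_numbers` is made up for illustration and is not part of any loader tooling.

```python
def disk_numbers(disk_idx_map: str) -> list[int]:
    """Convert a DiskIdxMap hex string into one-based disk numbers.

    Assumption: each two-hex-digit pair is a zero-based starting index,
    so 0C (decimal 12) corresponds to disk 13, as in the quoted post.
    """
    if len(disk_idx_map) % 2 != 0:
        raise ValueError("DiskIdxMap must contain whole two-digit hex pairs")
    pairs = [disk_idx_map[i:i + 2] for i in range(0, len(disk_idx_map), 2)]
    return [int(pair, 16) + 1 for pair in pairs]


print(disk_numbers("0C"))  # [13]
print(disk_numbers("1F"))  # [32]
```

this only reproduces the arithmetic (0C = 13, 1F = 32); it says nothing about whether a given value lets DSM update afterwards.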

-

On 10/20/2020 at 10:38 AM, Warlock928 said:

is anyone using quicknicks loader?? i see nothing has been posted in nearly 3 years

were you able to use it, and to use more than 24 drives?

-

-

On 1/11/2021 at 6:46 AM, boghea said:

VMXNET3 working only with CPU IOMMU disabled (VMware CPU option)

Hello all,

For everyone that is interested in using 10GB VMXNET3 network adapter - must be in addition to an Intel E1000E because you will not be able to install/update

For example, my setup consists of one E1000E - used for normal access of Synology services and 2 VMXNET3 NIC's used for iSCSI/NFS access to my ESXi hosts. I have a 2 port 10GB physical NIC on the ESXi.

You can use perfectly fine VMXNET3, even with DirectPath I/O checked, as long as you DON'T enable CPU IOMMU - this took a while to figure out and I hope this will help someone in a similar scenario

Please post this information where is best suited.

many thanks, this fixed it for me.

-

@IG-88 @flyride any update ?

-

-

2 hours ago, IG-88 said:

in theory he tried it with dsm 6.2.3 and that should be working (in theory)

yes, i'm using 6.2.3 update 2, which was working with vmxnet3 before i discovered the issue that i'm referring to in this post. the thing is that i cannot get it to work again. either way, i'm not sure what i'm doing wrong or what i have misconfigured, given that it is working for others but not for me.

this is on me.

Posted in General Questions

Between me trying to get redpill running on bare metal, going back to esxi, and ignorantly rebooting the vm, i believe i have damaged my volume. My question is: can this be repaired, or do i need to try to get the data off and rebuild the volume?

Here are some of the commands i have run so far. Thank you in advance.

    root@xpe_ds3617:~# btrfs scrub start -Bd /dev/sdxy
    ERROR: cannot check /dev/sdxy: No such file or directory
    ERROR: '/dev/sdxy' is not a mounted btrfs device
    root@xpe_ds3617:~# ls
    root@xpe_ds3617:~# diskutil list
    -ash: diskutil: command not found
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# df
    Filesystem               1K-blocks        Used   Available Use% Mounted on
    /dev/md0                   2385528     1869756      396988  83% /
    devtmpfs                   9172180           0     9172180   0% /dev
    tmpfs                      9208580           4     9208576   1% /dev/shm
    tmpfs                      9208580       19712     9188868   1% /run
    tmpfs                      9208580           0     9208580   0% /sys/fs/cgroup
    tmpfs                      9208580         628     9207952   1% /tmp
    /dev/mapper/cachedev_0 60907333880 40438417680 20468916200  67% /volume1
    root@xpe_ds3617:~# btrfs scrub start -Bd /volume1
    ERROR: scrubbing /volume1 failed for device id 1: ret=-1, errno=30 (Read-only file system)
    scrub device /dev/mapper/cachedev_0 (id 1) canceled
    scrub started at Sat Nov 26 03:35:29 2022 and was aborted after 00:00:00
    total bytes scrubbed: 0.00B with 0 errors
    root@xpe_ds3617:~# sudo mount /dev/vg1000/lv /volume1
    mount: /volume1: special device /dev/vg1000/lv does not exist.
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# cat /etc/fstab
    none /proc proc defaults 0 0
    /dev/root / ext4 defaults 1 1
    /dev/mapper/cachedev_0 /volume1 btrfs auto_reclaim_space,ssd,synoacl,relatime,ro,nodev 0 0
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# lvdisplay -v
    Using logical volume(s) on command line.
    --- Logical volume ---
    LV Path                /dev/shared_cache_vg1/syno_vg_reserved_area
    LV Name                syno_vg_reserved_area
    VG Name                shared_cache_vg1
    LV UUID                tRSFsV-Qals-pzTO-YlfN-OJ41-32Sf-Nids7A
    LV Write Access        read/write
    LV Creation host, time ,
    LV Status              available
    # open                 0
    LV Size                12.00 MiB
    Current LE             3
    Segments               1
    Allocation             inherit
    Read ahead sectors     auto
    - currently set to     384
    Block device           249:0
    --- Logical volume ---
    LV Path                /dev/shared_cache_vg1/alloc_cache_1
    LV Name                alloc_cache_1
    VG Name                shared_cache_vg1
    LV UUID                SxlqfX-E9FV-RgAG-Lu9r-Kfg2-D8t8-tmztR4
    LV Write Access        read/write
    LV Creation host, time ,
    LV Status              available
    # open                 1
    LV Size                465.00 GiB
    Current LE             119040
    Segments               1
    Allocation             inherit
    Read ahead sectors     auto
    - currently set to     384
    Block device           249:1
    --- Logical volume ---
    LV Path                /dev/vg1/syno_vg_reserved_area
    LV Name                syno_vg_reserved_area
    VG Name                vg1
    LV UUID                OcLQa4-U13U-Hgoi-cE2g-A1hv-b39X-cXJP5J
    LV Write Access        read/write
    LV Creation host, time ,
    LV Status              available
    # open                 0
    LV Size                12.00 MiB
    Current LE             3
    Segments               1
    Allocation             inherit
    Read ahead sectors     auto
    - currently set to     2304
    Block device           249:2
    --- Logical volume ---
    LV Path                /dev/vg1/volume_1
    LV Name                volume_1
    VG Name                vg1
    LV UUID                GDViyC-hLT0-FOwK-xQ02-NkBe-55Kf-1ljUle
    LV Write Access        read/write
    LV Creation host, time ,
    LV Status              available
    # open                 1
    LV Size                59.09 TiB
    Current LE             15489536
    Segments               8
    Allocation             inherit
    Read ahead sectors     auto
    - currently set to     2304
    Block device           249:3
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# sudo vgchange -ay
    2 logical volume(s) in volume group "shared_cache_vg1" now active
    2 logical volume(s) in volume group "vg1" now active
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# sudo mount /dev/vg1000/lv /volume1
    mount: /volume1: special device /dev/vg1000/lv does not exist.
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# sudo mount /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo mount /dev/vg1000/lv /volume1
    mount: /volume1: special device /dev/vg1000/lv does not exist.
    root@xpe_ds3617:~# sudo mount /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo vgchange -ay
    2 logical volume(s) in volume group "shared_cache_vg1" now active
    2 logical volume(s) in volume group "vg1" now active
    root@xpe_ds3617:~#
    root@xpe_ds3617:~# sudo mount /dev/vg1/lv /volume_1
    mount: /volume_1: mount point does not exist.
    root@xpe_ds3617:~# sudo mount -o clear_cache /dev/vg1/lv /volume_1
    mount: /volume_1: mount point does not exist.
    root@xpe_ds3617:~# sudo mount /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo mount -o clear_cache /dev/vg1/lv /volume_1
    mount: /volume_1: mount point does not exist.
    root@xpe_ds3617:~# sudo mount -o clear_cache /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo mount -o recovery /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo mount -o recovery /dev/vg1/lv /volume_1
    mount: /volume_1: mount point does not exist.
    root@xpe_ds3617:~# sudo mount -o recovery,ro /dev/vg1/lv /volume_1
    mount: /volume_1: mount point does not exist.
    root@xpe_ds3617:~# sudo mount -o recovery,ro /dev/vg1/lv /volume1
    mount: /volume1: special device /dev/vg1/lv does not exist.
    root@xpe_ds3617:~# sudo btrfs rescue super /dev/vg1/lv
    ERROR: mount check: cannot open /dev/vg1/lv: No such file or directory
    ERROR: could not check mount status: No such file or directory
    root@xpe_ds3617:~#
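
Reading the output above, lvdisplay lists four LV paths, and neither /dev/vg1000/lv nor /dev/vg1/lv is among them, which would be consistent with the "special device ... does not exist" errors. The following is a minimal Python sketch of that comparison. The excerpt is copied from the lvdisplay output above, the helper name `lv_paths` is made up for illustration, and the script only compares strings; it does not touch any device or attempt a repair.

```python
import re

# "LV Path" lines copied from the lvdisplay -v output in this post.
LVDISPLAY_EXCERPT = """\
--- Logical volume ---
LV Path /dev/shared_cache_vg1/syno_vg_reserved_area
--- Logical volume ---
LV Path /dev/shared_cache_vg1/alloc_cache_1
--- Logical volume ---
LV Path /dev/vg1/syno_vg_reserved_area
--- Logical volume ---
LV Path /dev/vg1/volume_1
"""

# Device paths that were passed to mount / btrfs rescue in this post.
ATTEMPTED = ["/dev/vg1000/lv", "/dev/vg1/lv"]


def lv_paths(lvdisplay_text: str) -> list[str]:
    """Return every path that follows an 'LV Path' label in the text."""
    return re.findall(r"^\s*LV Path\s+(\S+)", lvdisplay_text, flags=re.MULTILINE)


known = lv_paths(LVDISPLAY_EXCERPT)
not_listed = [path for path in ATTEMPTED if path not in known]

print("LV paths reported by lvdisplay:", known)
print("attempted paths not reported by lvdisplay:", not_listed)
```

Note also that df and /etc/fstab above show /volume1 already mounted from /dev/mapper/cachedev_0 with the ro option, which matches the "Read-only file system" error from the scrub.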