Hey guys,

I've finally brought myself to write my problem down. I hope you can help me.

I have the DS918+ model with DSM version 6.2-23739. I am using 8x 4TB WD Reds connected to the mainboard, plus 2x 4TB WD Reds and 2 SSDs on an HBA controller.

I use the 8 WD Reds as an SHR volume with 1-disk redundancy. I also used an SSD cache made of the two 128GB SSDs.

Now to my problem...

I wanted to upgrade my SHR from 8x4TB to 10x4TB.

Since it wasn't the first time I had added disks to the SHR, I thought it would be a good idea to add both new disks at the same time (maybe this was the first mistake in my chain of errors).

At first everything seemed fine, but the reshape was extremely slow (`cat /proc/mdstat` said it would take 140 days to complete). After some research I raised the stripe cache size to the maximum of 16384.
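For context on what that setting costs: a minimal Python sketch estimating how much RAM the md stripe cache pins. It assumes the commonly cited rule of thumb page size x number of member disks x `stripe_cache_size` and a 4 KiB page size; both are assumptions, not values measured on this NAS, and the function name is hypothetical.

```python
PAGE_SIZE = 4096  # bytes; assumed typical x86-64 page size


def stripe_cache_bytes(stripe_cache_size: int, nr_disks: int, page_size: int = PAGE_SIZE) -> int:
    """Approximate RAM held by the md RAID5/6 stripe cache.

    Rule of thumb (assumption): one page per member disk per cache entry,
    i.e. page_size * nr_disks * stripe_cache_size.
    """
    return page_size * nr_disks * stripe_cache_size


if __name__ == "__main__":
    # Compare the array before (8 disks) and during/after the reshape (10 disks).
    for disks in (8, 10):
        mib = stripe_cache_bytes(16384, disks) / 2**20
        print(f"{disks} disks, stripe_cache_size=16384: {mib:.0f} MiB")
```

Under these assumptions, 16384 works out to 512 MiB with 8 disks and 640 MiB with 10, which is worth comparing against the RAM installed in the NAS.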

The change took effect immediately, and `cat /proc/mdstat` then estimated less than a week. At about 40%, the NAS suddenly became unreachable over IP and the network; PuTTY didn't work either.
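A small Python sketch for turning the progress line of `cat /proc/mdstat` into an estimate in days. The regular expression assumes the usual `reshape = X% (done/total) finish=Nmin speed=NK/sec` layout; the sample line is made up for illustration and is not copied from this NAS.

```python
import re

# Progress line md prints during a reshape/resync/recovery/check (assumed layout).
PROGRESS_RE = re.compile(
    r"(?P<action>reshape|resync|recovery|check)\s*=\s*(?P<pct>[\d.]+)%\s*"
    r"\((?P<done>\d+)/(?P<total>\d+)\)\s*"
    r"finish=(?P<finish>[\d.]+)min\s*speed=(?P<speed>\d+)K/sec"
)


def parse_progress(mdstat_text: str):
    """Return (action, percent, finish_days, speed_k_per_sec) for the first progress line, or None."""
    m = PROGRESS_RE.search(mdstat_text)
    if m is None:
        return None
    finish_days = float(m["finish"]) / (60 * 24)  # mdstat reports finish= in minutes
    return m["action"], float(m["pct"]), finish_days, int(m["speed"])


# Hypothetical sample line with made-up numbers.
SAMPLE = "[======>..............]  reshape = 35.0% (1367064576/3905898432) finish=201494.7min speed=210K/sec"

if __name__ == "__main__":
    print(parse_progress(SAMPLE))
```

With the made-up sample, `finish=201494.7min` comes out at just under 140 days, the same order as the estimate mentioned above.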

After a full day it was still unreachable, so I restarted the NAS completely (yes, I cut the power and restarted it). After rebooting, the reshape continued from about 35%, and I changed the stripe cache size again so it would go faster. I also used the NAS normally during the reshape (opening files, copying and pasting), but performance was very slow; by the end, files copied at just 1MB/sec.

About a week later it was at roughly 95%, and when I looked at DSM the next day, it said my volume had crashed. The log said that both new drives had an error shortly before the reshape finished (I don't know which error, and I don't have screenshots). SMART tests of both new 4TB drives are OK.

The volume was still reachable over the network, but most of the files were corrupt and unreadable. The volume's Repair function didn't work: after clicking Repair, nothing happened. So I restarted the NAS, and for 16 days now it has been stuck rebooting, and I don't know what to do. htop says the volume has been reading at 160M/sec for those 16 days! What is it reading??
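To put the 160M/sec figure in perspective, a rough back-of-the-envelope sketch. It assumes htop's "M" means 10^6 bytes, a constant rate over the full 16 days, and decimal 4 TB drives; it says nothing about *what* is being read.

```python
SECONDS_PER_DAY = 86_400


def total_read_bytes(rate_bytes_per_s: float, days: float) -> float:
    """Total bytes read at a constant rate over the given number of days."""
    return rate_bytes_per_s * days * SECONDS_PER_DAY


if __name__ == "__main__":
    rate = 160e6               # assumption: "160M/sec" = 160 * 10**6 bytes per second
    raw_capacity = 10 * 4e12   # 10 drives x 4 TB (decimal), parity and overhead included
    read = total_read_bytes(rate, 16)
    print(f"read ~{read / 1e12:.1f} TB, ~{read / raw_capacity:.1f}x the raw capacity")
```

Under those assumptions that is about 221 TB, roughly 5.5 times the 40 TB raw capacity of the ten drives, so far more than one full read of every disk.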

I hope you can help me fix this and restore my volume!

Thanks for helping

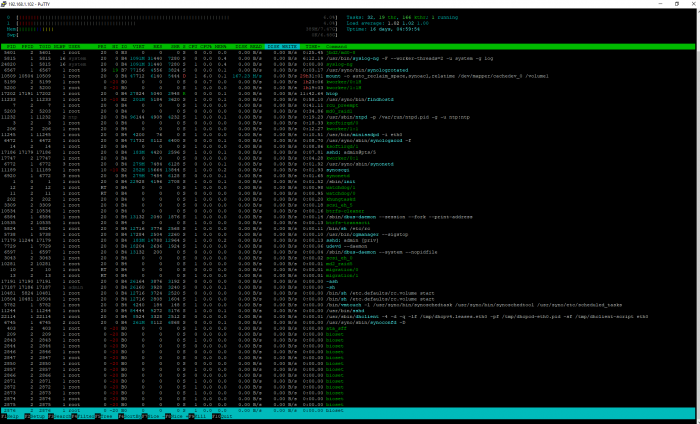

PuTTY screenshot here (jbd2/md0-8, /usr/bin/syslog and /synologrotated write a few bytes every once in a while):

Posted by JigglyJoe


Edited by JigglyJoe
