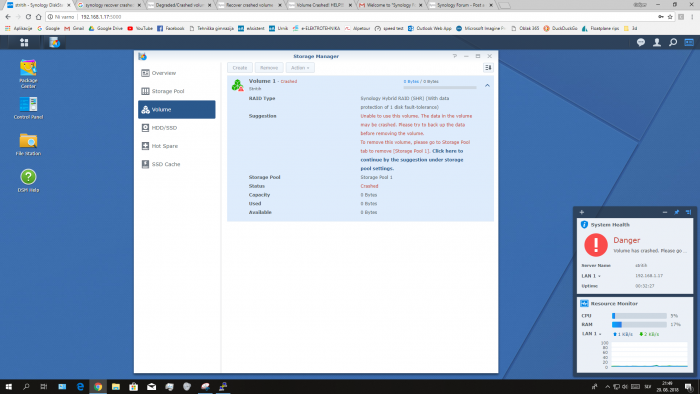

Posted by Gapy10 on August 25, 2018 (edited)

Hi, I am using Synology Hybrid RAID (SHR) with 5 drives. Four are working fine, but one is failing, and my volume has crashed. I know that SHR has 1-disk fault tolerance, so it should recover if only one drive has failed, but I cannot recover it. Please help me.

(Edited August 26, 2018 by Polanskiman: edited title to conform to rules & guidelines. Read them.)

Posted by flyride on August 26, 2018

How did the array look before the crash? Right now DSM seems confused about the construction of the original array, and your drives are in an odd order. Did you rearrange the drives or remove drives from the array in an attempt to fix it? SSH into your box and run:

$ cat /proc/mdstat

Post the results here.

Posted by Gapy10 (author) on August 26, 2018

Yes. In an attempt to fix it, I physically unplugged all the drives from the motherboard and plugged them back in a different order.

Posted by flyride on August 27, 2018

I'm sorry, but you are in a bad way now, and your actions so far have probably made things more difficult to recover. There is no step-by-step method for fixing your problem at this point. However, depending on how much time and energy you want to devote to learning md and LVM, you might be able to retrieve some data from the system. I have a few thoughts that may help you, and that also explain the situation for others:

A RAID corruption like this is where SHR makes the problem much harder to resolve. If this were a RAID1, RAID5, RAID6 or RAID10 array, the solution would be easier. Personally, I think about this when building a system and selecting the array redundancy strategy.

MDRAID writes a comprehensive superblock containing information about the entire array to each member partition on each drive. When the array is healthy, you can move drives around without much complaint from DSM. When the array is broken, moving drives can cause an array rebuild to fail. Before doing anything else, you should restore the drives to the order they were in before the crash.

/proc/mdstat reports four arrays in the system. /dev/md0 is the OS array and is healthy. /dev/md1 is the swap array and is also healthy. These are multi-member RAID1 arrays, so they keep working when a drive is missing from the system. However, it's odd that the missing member in each of these arrays is out of order, and that the numbering does not match between the arrays.

I believe /dev/md2 and /dev/md3 are the two arrays that make up the SHR. Each is reporting only 1 partition present (there should be 4 in /dev/md2 and 3 in /dev/md3), which is why everything is crashed. When the drives are in the correct physical order, hopefully /dev/md2 and /dev/md3 will show up in a critical (but functional) state instead of crashed. If so, you just need to replace your bad drive and repair your array.
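The member counts discussed above come from the "[expected/active]" field on each array's status line in /proc/mdstat. The sketch below pulls that field out for every array and flags the ones with fewer working members than slots. It is a minimal illustration, not a DSM tool: the function name mdstat_summary is hypothetical, and it assumes the standard Linux mdstat layout, where an "mdN : ..." header line is followed by a status line such as "[4/1] [U___]" (4 member slots, 1 working).

```shell
#!/bin/sh
# Sketch: summarize member counts per md array from /proc/mdstat.
# Assumes the usual layout: an "mdN : ..." header line, followed by a
# status line containing "[expected/active]", e.g. "[4/1] [U___]".
# Hypothetical helper name; it only reads text and changes nothing.
mdstat_summary() {
  awk '
    # Remember which array the following status line belongs to.
    /^md[0-9]+ :/ { dev = $1; next }
    # First "[n/m]" after the header: n = member slots, m = working members.
    dev != "" && match($0, /\[[0-9]+\/[0-9]+]/) {
      split(substr($0, RSTART + 1, RLENGTH - 2), n, "/")
      state = (n[2] + 0 < n[1] + 0) ? "DEGRADED" : "ok"
      printf "%s expected=%s active=%s %s\n", dev, n[1], n[2], state
      dev = ""
    }
  ' "${1:-/proc/mdstat}"
}
```

Run mdstat_summary with no argument to read /proc/mdstat directly, or pass it a saved copy of the output. Arrays whose status line has no [n/m] field (for example raid0 or linear) are skipped. A DEGRADED flag only means fewer members are working than the array was built with, so read it alongside what DSM's Storage Manager reports.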
If you still see missing member partitions in /dev/md2 and /dev/md3, you may want to try stopping those arrays and force-assembling them. The system can scan the superblocks and guess which partitions belong to which arrays, or you can specify them manually. Again, there isn't a step-by-step method for this, as it will depend on what /proc/mdstat and the individual partition superblock dumps tell you at that point. You might start by reviewing the recovery examples in this thread here and the mdadm administrative information here.

If you succeed in getting your arrays started, you might end up in a situation where the volume is accessible from the command line but not visible in DSM or via a network share. In that case, you can get data off by copying directly from /volume1/<share> to an external drive. If that works, I suggest copying your data off, deleting your volume and storage pool, recreating them, and copying your data back on.

I wish I could be of more help, but this is pretty far down the rabbit hole. Take your time, and good luck to you.
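For the superblock dumps mentioned above, mdadm --examine prints the superblock stored on each member partition. The sketch below condenses that output to one line per partition: partition, Array UUID, device role (the slot it held in the array) and event count. It assumes v1.x superblocks, which report "Array UUID", "Events" and "Device Role" fields, and, as a hypothetical example only, that the SHR data partitions are the fifth partition on each disk; check the actual partition names against your own /proc/mdstat. The function name examine_summary is hypothetical.

```shell
#!/bin/sh
# Sketch: condense `mdadm --examine` output to one line per partition:
#   <partition> <array UUID> <device role/slot> <event count>
# Assumes v1.x superblocks ("Array UUID", "Events", "Device Role" fields).
# Read-only: it parses saved text and never touches an array.
examine_summary() {
  awk '
    # A line such as "/dev/sda5:" starts a new partition record.
    /^\/dev\// {
      if (dev != "") print dev, uuid, role, events
      dev = $1
      sub(/:$/, "", dev)
      uuid = role = events = "?"
      next
    }
    /Array UUID :/  { uuid = $NF }
    /Events :/      { events = $NF }
    # "Device Role : Active device 2" gives slot 2; "spare" stays "spare".
    /Device Role :/ { role = $NF }
    END { if (dev != "") print dev, uuid, role, events }
  ' "$@"
}

# Example (hypothetical device names; run as root on the NAS):
#   for p in /dev/sd[a-e]5; do mdadm --examine "$p"; done > examine.txt
#   examine_summary examine.txt
```

Partitions that share an Array UUID belong to the same array, and the role column shows each partition's original slot, which helps when restoring the drive order. A partition whose event count lags well behind the others missed later superblock updates and holds stale data; it may be the failing drive. Only after reviewing this would a forced reassembly such as mdadm --stop /dev/md2 followed by mdadm --assemble --force /dev/md2 with the chosen partitions make sense, since forcing the wrong set of partitions can make recovery harder.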
