petersnows

Transition Member

Posts: 16


petersnows's Achievements

Newbie (1/7)

Reputation: 0

-

I decided to use the DVA1622 and ARPL (with 4 HDs and 2 NICs). I was probably getting this error because my machine (HP MicroServer Gen8, ESXi 6.5) is not supported by DVA3221, maybe because it does not have the FMA ...

-

I believe I found it: https://gugucomputing.wordpress.com/2018/11/11/experiment-on-sata_args-in-grub-cfg/

89 replies

Tagged with: virtualization, tcrp (and 2 more)

-

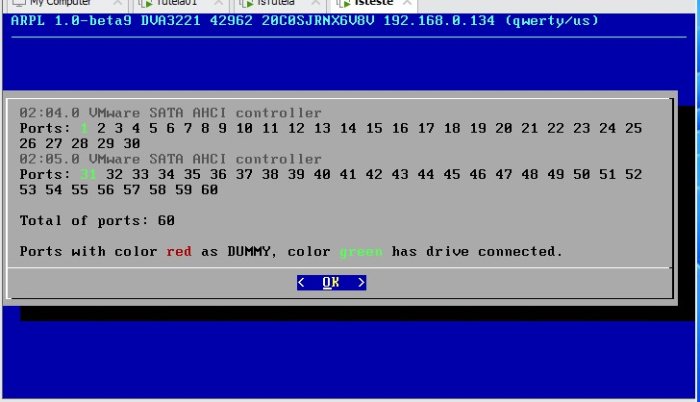

Is there a place that explains SataPortMap, DiskIdxMap and sata_remap? I have read about so many configuration variants in the forums and still don't understand how to configure these variables. I have a VMware guest and I am using ARPL. I have the arpl.vmdk disk on sata0:0 and a data drive on sata1:0.
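As a rough illustration of how these two values are commonly read (an assumption based on the usual community description, not something confirmed in this thread): SataPortMap carries one digit per SATA controller giving its port count, and DiskIdxMap carries two hex digits per controller giving the index of that controller's first disk. The sketch below only decodes the strings; the function name `decode_sata_maps` and the sample values are hypothetical, and sata_remap is not covered.

```python
from typing import List, Optional, Tuple


def decode_sata_maps(
    sata_port_map: str, disk_idx_map: str
) -> List[Tuple[int, int, Optional[int], Optional[str]]]:
    """Decode SataPortMap/DiskIdxMap into one tuple per SATA controller.

    Each tuple is (controller, port_count, first_disk_index, first_device_name).
    """
    if len(disk_idx_map) % 2:
        raise ValueError("DiskIdxMap must contain two hex digits per controller")
    # DiskIdxMap: two hex digits per controller, the index of its first disk.
    starts = [int(disk_idx_map[i:i + 2], 16) for i in range(0, len(disk_idx_map), 2)]
    layout = []
    for controller, digit in enumerate(sata_port_map):
        ports = int(digit)  # SataPortMap: one decimal digit per controller
        start = starts[controller] if controller < len(starts) else None
        # Index 0 is sda, 1 is sdb, ...; names past sdz are not handled here.
        name = "sd" + chr(ord("a") + start) if start is not None and start < 26 else None
        layout.append((controller, ports, start, name))
    return layout


# Illustrative values only, not a recommendation for any particular machine.
for row in decode_sata_maps("11", "1000"):
    print(row)
```

With these sample values the first controller (one port) would start at disk index 16 (sdq) and the second controller (one port) at index 0 (sda), which is the kind of layout people use to push a loader disk out of the way of the data disks.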


-

Hello,

I am not able to build a RedPill loader on ESXi 6.5. These are the commands I ran:

./rploader.sh update
./rploader.sh full upgrade
./rploader.sh serialgen DVA3221 now
./rploader.sh satamap now
./rploader.sh build dva3221-7.0.1-42218

What am I doing wrong? I would appreciate any help. I don't have a graphics card, but I don't need one, since I don't need the AI features.

I get this error:

[#] Creating loader image at loader.img... [ERR]
[!] Failed to copy /home/tc/redpill-load/config/_common/bzImage to /home/tc/redpill-load/build/1671155955/img-mnt/part-2/bzImage
/usr/local/bin/cp: cannot stat '/home/tc/redpill-load/config/_common/bzImage': No such file or directory

The full messages:

tc@box:~$ ./rploader.sh build dva3221-7.0.1-42218
Rploader Version : 0.9.3.0
Loader source : https://github.com/pocopico/redpill-load.git Loader Branch : develop
Redpill module source : https://github.com/pocopico/redpill-lkm.git : Redpill module branch : master
Extensions : redpill-misc
Extensions URL : "https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json"
TOOLKIT_URL : https://sourceforge.net/projects/dsgpl/files/toolkit/DSM7.0/ds.denverton-7.0.dev.txz/download
TOOLKIT_SHA : 6dc6818bad28daff4b3b8d27b5e12d0565b65ee60ac17e55c36d913462079f57
SYNOKERNEL_URL : https://sourceforge.net/projects/dsgpl/files/Synology%20NAS%20GPL%20Source/25426branch/denverton-source/linux-4.4.x.txz/download
SYNOKERNEL_SHA : d3e85eb80f16a83244fcae6016ab6783cd8ac55e3af2b4240455261396e1e1be
COMPILE_METHOD : toolkit_dev
TARGET_PLATFORM : dva3221
TARGET_VERSION : 7.0.1
TARGET_REVISION : 42218
REDPILL_LKM_MAKE_TARGET : dev-v7
KERNEL_MAJOR : 4
MODULE_ALIAS_FILE : modules.alias.4.json
SYNOMODEL : dva3221_42218
MODEL : DVA3221
Local Cache Folder : /mnt/sda3/auxfiles
DATE Internet : 16122022 Local : 15122022
ERROR !
System DATE is not correct Downloading ntpclient to assist Current time after communicating with NTP server pool.ntp.org : Fri Dec 16 01:58:47 UTC 2022 Checking Internet Access -> OK Checking if a newer version exists on the main repo -> Version is current Cloning into 'redpill-lkm'... remote: Enumerating objects: 1556, done. remote: Counting objects: 100% (628/628), done. remote: Compressing objects: 100% (235/235), done. remote: Total 1556 (delta 417), reused 549 (delta 380), pack-reused 928 Receiving objects: 100% (1556/1556), 5.75 MiB | 6.86 MiB/s, done. Resolving deltas: 100% (980/980), done. Cloning into 'redpill-load'... remote: Enumerating objects: 2983, done. remote: Counting objects: 100% (465/465), done. remote: Compressing objects: 100% (228/228), done. remote: Total 2983 (delta 239), reused 420 (delta 214), pack-reused 2518 Receiving objects: 100% (2983/2983), 118.51 MiB | 8.81 MiB/s, done. Resolving deltas: 100% (1464/1464), done. No extra build option or static specified, using default <static> Using static compiled redpill extension Removing any old redpill.ko modules Looking for redpill for : dva3221_42218 Getting file https://raw.githubusercontent.com/pocopico/rp-ext/master/redpillprod/releases/redpill-4.4.180plus-denverton.tgz Extracting module Getting file https://raw.githubusercontent.com/pocopico/rp-ext/master/redpillprod/src/check-redpill.sh Got redpill-linux-v4.4.180+.ko Testing modules.alias.4.json -> File OK ------------------------------------------------------------------------------------------------ It looks that you will need the following modules : Found IDE Controller : pciid 8086d00007111 Required Extension : ata_piix ata_piix Searching for matching extension for ata_piix Found VGA Controller : pciid 15add00000405 Required Extension : No matching extension [#] Checking runtime for required tools... [OK] [#] Adding new extension from https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json... 
[#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ######################################################################## 100.0% [OK] [#] ========================================== pocopico.e1000 ========================================== [#] Extension name: e1000 [#] Description: Adds Intel(R) PRO/1000 Network Driver Support [#] To get help visit: <todo> [#] Extension preparer/packer: https://github.com/pocopico/rp-ext/tree/main/e1000 [#] Software author: https://github.com/pocopico [#] Update URL: https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json [#] Platforms supported: ds1621p_42218 ds1621p_42951 ds918p_41890 dva3221_42661 ds3617xs_42621 ds3617xs_42218 ds920p_42661 dva3221_42962 ds918p_42661 ds3622xsp_42962 ds3617xs_42951 dva1622_42218 dva1622_42621 ds920p_42962 ds1621p_42661 dva1622_42951 ds918p_25556 dva3221_42218 ds3615xs_42661 dva3221_42951 ds3622xsp_42661 ds2422p_42661 ds3622xsp_42218 ds2422p_42962 rs4021xsp_42621 dva1622_42962 ds2422p_42218 rs4021xsp_42962 dva3221_42621 ds3615xs_42962 ds3617xs_42962 ds3615xs_41222 ds920p_42951 rs4021xsp_42218 ds2422p_42951 ds918p_42621 ds3617xs_42661 ds3615xs_25556 ds920p_42218 rs4021xsp_42951 ds920p_42621 ds918p_42962 ds3615xs_42951 ds3622xsp_42951 dva1622_42661 ds918p_42218 ds2422p_42621 ds1621p_42621 ds3615xs_42621 ds3615xs_42218 ds1621p_42962 ds3622xsp_42621 rs4021xsp_42661 [#] ======================================================================================= Found Ethernet Interface : pciid 8086d0000100f Required Extension : e1000 Searching for matching extension for e1000 Found matching extension : "https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json" [#] Checking runtime for required tools... [OK] [#] Adding new extension from https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json... 
[#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ######################################################################## 100.0% [!] Extension is already added (index exists at /home/tc/redpill-load/custom/extensions/pocopico.e1000/pocopico.e1000.json). For more info use "ext-manager.sh info pocopico.e1000" *** Process will exit *** Found Ethernet Interface : pciid 8086d0000100f Required Extension : e1000 Searching for matching extension for e1000 Found matching extension : "https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json" Found SATA Controller : pciid 15add000007e0 Required Extension : No matching extension Found SATA Controller : pciid 15add000007e0 Required Extension : No matching extension ------------------------------------------------------------------------------------------------ Starting loader creation Found tinycore cache folder, linking to home/tc/custom-module Checking user_config.json : Done Entering redpill-load directory Removing bundled exts directories Cache directory OK cp: cannot stat '/home/tc/custom-module/*42218*.pat': No such file or directory Processing add_extensions entries found on custom_config.json file : redpill-misc Adding extension "https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json" [#] Checking runtime for required tools... [OK] [#] Adding new extension from https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json... 
[#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ##################################################################################################################################################### 100.0% [OK] [#] ========================================== redpill-misc ========================================== [#] Extension name: Misc shell [#] Description: Misc shell [#] To get help visit: https://github.com/pocopico/redpill-load/raw/develop/redpill-misc [#] Extension preparer/packer: https://github.com/pocopico/redpill-load/raw/develop/redpill-misc [#] Software author: https://github.com/pocopico/redpill-load/raw/develop/redpill-misc [#] Update URL: https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json [#] Platforms supported: ds1621p_42218 ds1621p_42951 ds918p_41890 dva3221_42661 ds3617xs_42621 ds3617xs_42218 ds920p_42661 dva3221_42962 ds918p_42661 ds3622xsp_42962 ds3617xs_42951 ds920p_42962 ds1621p_42661 ds923p_42962 dva1622_42951 ds918p_25556 dva3221_42218 ds3615xs_42661 dva3221_42951 ds3622xsp_42661 ds2422p_42661 ds3622xsp_42218 ds2422p_42962 rs4021xsp_42621 dva1622_42962 sa6400_42962 ds2422p_42218 rs4021xsp_42962 dva3221_42621 ds3615xs_42962 ds3617xs_42962 ds3615xs_41222 ds920p_42951 rs4021xsp_42218 ds918p_42621 ds3617xs_42661 ds3615xs_25556 ds920p_42218 rs4021xsp_42951 ds920p_42621 ds918p_42962 ds3615xs_42951 ds3622xsp_42951 dva1622_42661 ds918p_42218 ds1621p_42621 ds3615xs_42621 ds3615xs_42218 ds1621p_42962 ds3622xsp_42621 rs4021xsp_42661 [#] ======================================================================================= Updating extension : redpill-misc contents for model : dva3221_42218 [#] Checking runtime for required tools... [OK] [#] Updating dva3221_42218 platforms extensions... 
[#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/recipes/universal.json to /home/tc/redpill-load/custom/extensions/_ext_new_rcp.tmp_json ##################################################################################################################################################### 100.0% [#] Filling-in newly downloaded recipe for extension redpill-misc platform dva3221_42218 [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/releases/install.sh to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install.sh ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install.sh file... [OK] [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/releases/install-all.sh to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install-all.sh ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install-all.sh file... [OK] [#] Downloading remote file https://github.com/tsl0922/ttyd/releases/download/1.6.3/ttyd.x86_64 to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/ttyd ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/ttyd file... 
[OK] [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/releases/install_rd.sh to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install_rd.sh ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/install_rd.sh file... [OK] [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/releases/lrzsz.tar.gz to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/lrzsz.tar.gz ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/lrzsz.tar.gz file... [OK] [#] Unpacking files from /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/lrzsz.tar.gz to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/... [OK] [#] Successfully processed recipe for extension redpill-misc platform dva3221_42218 [#] Unpacking files from /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/lrzsz.tar.gz to /home/tc/redpill-load/custom/extensions/redpill-misc/dva3221_42218/... [OK] [#] Checking runtime for required tools... [OK] [#] Updating extensions... [#] Checking runtime for required tools... [OK] [#] Adding new extension from https://github.com/pocopico/rp-ext/raw/main/redpill-boot-wait/rpext-index.json... 
[#] Downloading remote file https://github.com/pocopico/rp-ext/raw/main/redpill-boot-wait/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ##################################################################################################################################################### 100.0% [OK] [#] ========================================== redpill-boot-wait ========================================== [#] Extension name: RedPill Bootwait [#] Description: Simple extension which stops the execution early waiting for the boot device to appear [#] To get help visit: https://github.com/pocopico/rp-ext/redpill-boot-wait [#] Extension preparer/packer: https://github.com/pocopico/rp-ext/tree/main/redpill-boot-wait [#] Update URL: https://raw.githubusercontent.com/pocopico/rp-ext/master/redpill-boot-wait/rpext-index.json [#] Platforms supported: ds1621p_42218 ds1621p_42951 ds918p_41890 dva3221_42661 ds3617xs_42621 ds3617xs_42218 ds920p_42661 dva3221_42962 ds918p_42661 ds3622xsp_42962 ds3617xs_42951 ds920p_42962 ds1621p_42661 ds923p_42962 dva1622_42951 ds918p_25556 dva3221_42218 ds3615xs_42661 dva3221_42951 ds3622xsp_42661 ds2422p_42661 ds3622xsp_42218 ds2422p_42962 rs4021xsp_42621 dva1622_42962 ds2422p_42218 rs4021xsp_42962 dva3221_42621 ds3615xs_42962 ds3617xs_42962 ds3615xs_41222 ds920p_42951 rs4021xsp_42218 ds2422p_42951 ds918p_42621 ds3617xs_42661 ds3615xs_25556 ds920p_42218 rs4021xsp_42951 ds920p_42621 ds918p_42962 ds3615xs_42951 ds3622xsp_42951 ds920p_42550 dva1622_42661 ds918p_42218 ds2422p_42621 ds1621p_42621 ds3615xs_42621 ds3615xs_42218 ds1621p_42962 ds3622xsp_42621 rs4021xsp_42661 [#] ======================================================================================= [#] Checking runtime for required tools... [OK] [#] Updating pocopico.e1000 extension... 
[#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ##################################################################################################################################################### 100.0% [#] Extension pocopico.e1000 index is already up to date [#] Updating redpill-boot-wait extension... [#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/redpill-boot-wait/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ##################################################################################################################################################### 100.0% [#] Extension redpill-boot-wait index is already up to date [#] Updating redpill-misc extension... [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/rpext-index.json to /home/tc/redpill-load/custom/extensions/_new_ext_index.tmp_json ##################################################################################################################################################### 100.0% [#] Extension redpill-misc index is already up to date [#] Updating redpill-misc extension... [OK] [#] Checking runtime for required tools... [OK] [#] Updating dva3221_42218 platforms extensions... 
[#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/releases/dva3221_42218.json to /home/tc/redpill-load/custom/extensions/_ext_new_rcp.tmp_json ##################################################################################################################################################### 100.0% [#] Filling-in newly downloaded recipe for extension pocopico.e1000 platform dva3221_42218 [#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/releases/e1000-4.4.180plus-denverton.tgz to /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/e1000-4.4.180plus-denverton.tgz ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/e1000-4.4.180plus-denverton.tgz file... [OK] [#] Unpacking files from /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/e1000-4.4.180plus-denverton.tgz to /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/... [OK] [#] Downloading remote file https://raw.githubusercontent.com/pocopico/rp-ext/master/e1000/src/check-e1000.sh to /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/check-e1000.sh ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/pocopico.e1000/dva3221_42218/check-e1000.sh file... 
[OK] [#] Successfully processed recipe for extension pocopico.e1000 platform dva3221_42218 [#] Downloading remote file https://github.com/pocopico/rp-ext/raw/main/redpill-boot-wait/recipes/universal.json to /home/tc/redpill-load/custom/extensions/_ext_new_rcp.tmp_json ##################################################################################################################################################### 100.0% [#] Filling-in newly downloaded recipe for extension redpill-boot-wait platform dva3221_42218 [#] Downloading remote file https://github.com/pocopico/rp-ext/raw/main/redpill-boot-wait/src/boot-wait.sh to /home/tc/redpill-load/custom/extensions/redpill-boot-wait/dva3221_42218/boot-wait.sh ##################################################################################################################################################### 100.0% [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-boot-wait/dva3221_42218/boot-wait.sh file... [OK] [#] Successfully processed recipe for extension redpill-boot-wait platform dva3221_42218 [#] Downloading remote file https://github.com/pocopico/redpill-load/raw/develop/redpill-misc/recipes/universal.json to /home/tc/redpill-load/custom/extensions/_ext_new_rcp.tmp_json ##################################################################################################################################################### 100.0% [#] Extension redpill-misc for dva3221_42218 platform is already up to date [#] Verifying /home/tc/redpill-load/custom/extensions/redpill-boot-wait/dva3221_42218/boot-wait.sh file... [OK] [#] Updating extensions... 
[OK]
[#] PAT file /home/tc/redpill-load/cache/dva3221_42218.pat not found - downloading from https://global.download.synology.com/download/DSM/release/7.0.1/42218/DSM_DVA3221_42218.pat
% Total % Received % Xferd Average Speed Time Time Time Current Dload Upload Total Spent Left Speed
100 347M 100 347M 0 0 44.6M 0 0:00:07 0:00:07 --:--:-- 45.2M
[#] Verifying /home/tc/redpill-load/cache/dva3221_42218.pat file... [OK]
[#] Unpacking /home/tc/redpill-load/cache/dva3221_42218.pat file to /home/tc/redpill-load/build/1671155955/pat-dva3221_42218-unpacked... [OK]
[#] Verifying /home/tc/redpill-load/build/1671155955/pat-dva3221_42218-unpacked/zImage file... [OK]
[#] Patching /home/tc/redpill-load/build/1671155955/pat-dva3221_42218-unpacked/zImage to /home/tc/redpill-load/build/1671155955/zImage-patched... [OK]
[#] Verifying /home/tc/redpill-load/build/1671155955/pat-dva3221_42218-unpacked/rd.gz file... [OK]
[#] Unpacking /home/tc/redpill-load/build/1671155955/pat-dva3221_42218-unpacked/rd.gz file to /home/tc/redpill-load/build/1671155955/rd-dva3221_42218-unpacked... [OK]
[#] Apply patches to /home/tc/redpill-load/build/1671155955/rd-dva3221_42218-unpacked... [OK]
[#] Patching config files in ramdisk... [OK]
[#] Adding OS config patching... [OK]
[#] Repacking ramdisk to /home/tc/redpill-load/build/1671155955/rd-patched-dva3221_42218.gz... [OK]
[#] Bundling extensions...
[#] Checking runtime for required tools... [OK]
[#] Dumping dva3221_42218 platform extensions to /home/tc/redpill-load/build/1671155955/custom-initrd/exts... [OK]
[#] Packing custom ramdisk layer to /home/tc/redpill-load/build/1671155955/custom.gz... [OK]
[#] Generating GRUB config... [OK]
[#] Creating loader image at loader.img... [ERR]
[!] Failed to copy /home/tc/redpill-load/config/_common/bzImage to /home/tc/redpill-load/build/1671155955/img-mnt/part-2/bzImage
/usr/local/bin/cp: cannot stat '/home/tc/redpill-load/config/_common/bzImage': No such file or directory
*** Process will exit ***
FAILED : Loader creation failed check the output for any errors
tc@box:~$ ls
LICENSE config/ custom_config_jun.json html/ redpill-lkm/ rploader.sh user_config.json
README.md custom-module dtc modules.alias.3.json redpill-load/ serialnumbergen.sh
check-redpill.sh custom_config.json global_config.json modules.alias.4.json rpext-index.json tools/
tc@box:~$
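In a log this long, the files that `cp` could not find are easy to miss. A minimal sketch, assuming the build output has been saved as plain text, that lists every path reported in a `cannot stat` message; the helper name `missing_paths` is hypothetical and this is not part of rploader.sh.

```python
import re
from typing import List


def missing_paths(log_text: str) -> List[str]:
    """Return each path named in a "cannot stat '...'" message, in first-seen order."""
    seen: List[str] = []
    for path in re.findall(r"cannot stat '([^']+)'", log_text):
        if path not in seen:  # the same failure is often reported more than once
            seen.append(path)
    return seen


# Two messages copied from the log above.
sample = (
    "cp: cannot stat '/home/tc/custom-module/*42218*.pat': No such file or directory\n"
    "/usr/local/bin/cp: cannot stat '/home/tc/redpill-load/config/_common/bzImage': "
    "No such file or directory\n"
)
for path in missing_paths(sample):
    print(path)
```

Run against the full output, this would surface both the missing cached `.pat` file and the missing `config/_common/bzImage`, the latter being the one that stops the build.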

-

SurveillanceStation-x86_64-8.2.2-5766

petersnows replied to montagnic's topic in Software

(Using Google Translate) Can you upload the files again? I mean the 3.6 MB ones, not the whole package. Thanks

-

Thanks IG-88.

I tested this before installing DSM. I created a USB pen with loader v1.04b DS918+ and "extra.lzma/extra2.lzma for loader 1.04b ds918+ DSM 6.2.3 v0.12.1", then:

- Plugged in the USB pen and booted: find.synology.com appears, but I am not able to connect to it using Synology Assistant.
- Plugged in a "USB-Ethernet device": find.synology.com appears and I am able to connect using Synology Assistant. I don't even use the "USB-Ethernet device" to connect to it.

I repeated the above 2 steps 3 times and got the same result every time. Connecting the "USB-Ethernet device" makes the "Ethernet Connection I219-V" work and ask for an IP. I always used the same USB pen. The RJ45 cable was properly connected; I could see the link lights on the switch and on the "Ethernet Connection I219-V".

A few questions:

- What is the USB vid/pid for? When is it used?
- After I install DSM, what does the synoboot (USB pen) do? Does it change and tweak the DSM installation every time it boots?
- If I want to duplicate the USB pen to replace the existing one, do I just need to recreate it like I did with the first one, set grub.cfg (vid/pid, depending on the USB pen brand, MAC, serial), and use the rd.gz and zImage of the latest installed .pat file? Or is there any other file that I need to be aware of?

-

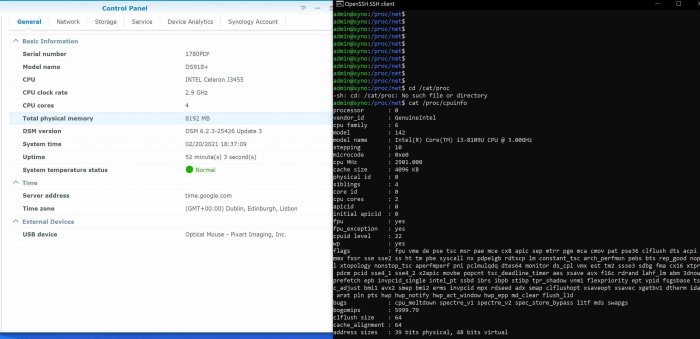

I was able to get Synology Assistant to work by connecting a USB-Ethernet card. Just by connecting it, without changing anything else, I can now see both network cards (the USB one and the Intel NUC one). Do you know why?

I then installed DSM as usual. Now I notice that the CPU shown in Control Panel (J3455) is not the one in the machine (i3-8109U). Does this impact performance?

Here is the lspci output of the NUC8i3BEH after installing DSM. I removed the USB-Ethernet device after installing DSM.

admin@syno:/proc/net$ lspci -q
0000:00:00.0 Host bridge: Intel Corporation Device 3ecc (rev 08)
0000:00:02.0 VGA compatible controller: Intel Corporation CoffeeLake-U GT3e [Iris Plus Graphics 655] (rev 01)
0000:00:08.0 System peripheral: Intel Corporation Xeon E3-1200 v5/v6 / E3-1500 v5 / 6th/7th/8th Gen Core Processor Gaussian Mixture Model
0000:00:12.0 Signal processing controller: Intel Corporation Cannon Point-LP Thermal Controller (rev 30)
0000:00:14.0 USB controller: Intel Corporation Cannon Point-LP USB 3.1 xHCI Controller (rev 30)
0000:00:14.2 RAM memory: Intel Corporation Cannon Point-LP Shared SRAM (rev 30)
0000:00:16.0 Communication controller: Intel Corporation Cannon Point-LP MEI Controller #1 (rev 30)
0000:00:17.0 SATA controller: Intel Corporation Cannon Point-LP SATA Controller [AHCI Mode] (rev 30)
0000:00:1d.0 PCI bridge: Intel Corporation Cannon Point-LP PCI Express Root Port #9 (rev f0)
0000:00:1d.6 PCI bridge: Intel Corporation Cannon Point-LP PCI Express Root Port #15 (rev f0)
0000:00:1f.0 ISA bridge: Intel Corporation Cannon Point-LP LPC Controller (rev 30)
0000:00:1f.3 Audio device: Intel Corporation Cannon Point-LP High Definition Audio Controller (rev 30)
0000:00:1f.4 SMBus: Intel Corporation Cannon Point-LP SMBus Controller (rev 30)
0000:00:1f.5 Serial bus controller [0c80]: Intel Corporation Cannon Point-LP SPI Controller (rev 30)
0000:00:1f.6 Ethernet controller: Intel Corporation Ethernet Connection (6) I219-V (rev 30)
0000:02:00.0 Unassigned class [ff00]: Realtek Semiconductor Co., Ltd. RTS522A PCI Express Card Reader (rev 01)
0001:00:12.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series SATA AHCI Controller (rev ff)
0001:00:13.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series PCI Express Port A #1 (rev ff)
0001:00:14.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series PCI Express Port B #1 (rev ff)
0001:00:15.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series USB xHCI (rev ff)
0001:00:16.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series I2C Controller #1 (rev ff)
0001:00:18.0 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series HSUART Controller #1 (rev ff)
0001:00:19.2 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series SPI Controller #3 (rev ff)
0001:00:1f.1 Non-VGA unclassified device: Intel Corporation Celeron N3350/Pentium N4200/Atom E3900 Series SMBus Controller (rev ff)
0001:01:00.0 Non-VGA unclassified device: Marvell Technology Group Ltd. 88SE9215 PCIe 2.0 x1 4-port SATA 6 Gb/s Controller (rev ff)
0001:02:00.0 Non-VGA unclassified device: Intel Corporation I211 Gigabit Network Connection (rev ff)
0001:03:00.0 Non-VGA unclassified device: Intel Corporation I211 Gigabit Network Connection (rev ff)
pcilib: Cannot write to /var/services/homes/admin/.pciids-cache: No such file or directory
admin@syno:/proc/net$ lspci
0000:00:00.0 Class 0600: Device 8086:3ecc (rev 08)
0000:00:02.0 Class 0300: Device 8086:3ea5 (rev 01)
0000:00:08.0 Class 0880: Device 8086:1911
0000:00:12.0 Class 1180: Device 8086:9df9 (rev 30)
0000:00:14.0 Class 0c03: Device 8086:9ded (rev 30)
0000:00:14.2 Class 0500: Device 8086:9def (rev 30)
0000:00:16.0 Class 0780: Device 8086:9de0 (rev 30)
0000:00:17.0 Class 0106: Device 8086:9dd3 (rev 30)
0000:00:1d.0 Class 0604: Device 8086:9db0 (rev f0)
0000:00:1d.6 Class 0604: Device 8086:9db6 (rev f0)
0000:00:1f.0 Class 0601: Device 8086:9d84 (rev 30)
0000:00:1f.3 Class 0403: Device 8086:9dc8 (rev 30)
0000:00:1f.4 Class 0c05: Device 8086:9da3 (rev 30)
0000:00:1f.5 Class 0c80: Device 8086:9da4 (rev 30)
0000:00:1f.6 Class 0200: Device 8086:15be (rev 30)
0000:02:00.0 Class ff00: Device 10ec:522a (rev 01)
0001:00:12.0 Class 0000: Device 8086:5ae3 (rev ff)
0001:00:13.0 Class 0000: Device 8086:5ad8 (rev ff)
0001:00:14.0 Class 0000: Device 8086:5ad6 (rev ff)
0001:00:15.0 Class 0000: Device 8086:5aa8 (rev ff)
0001:00:16.0 Class 0000: Device 8086:5aac (rev ff)
0001:00:18.0 Class 0000: Device 8086:5abc (rev ff)
0001:00:19.2 Class 0000: Device 8086:5ac6 (rev ff)
0001:00:1f.1 Class 0000: Device 8086:5ad4 (rev ff)
0001:01:00.0 Class 0000: Device 1b4b:9215 (rev ff)
0001:02:00.0 Class 0000: Device 8086:1539 (rev ff)
0001:03:00.0 Class 0000: Device 8086:1539 (rev ff)
admin@syno:/proc/net$

-

I am trying to install Xpenology on an Intel NUC NUC8i3BEH (Intel® Core™ i3-8109U, Intel® Ethernet Connection I219-V).

I have tried:

- v1.04b DS918+
- v1.04b DS918+ with extra.lzma/extra2.lzma for loader 1.04b ds918+ DSM 6.2.3 v0.13.3
- v1.04b DS918+ with extra.lzma/extra2.lzma for loader 1.04b ds918+ DSM 6.2.3 v0.12.1

I always get the find.synology.com screen, but I am not able to connect to it using Synology Assistant. I also don't see any new entry in the DHCP server. Can anyone help me? I think the driver for this device is e1000e, but it's not working.

-

Hello guys,

I just updated my Synology setup to 6.2.2-24922 (v1.03b - DS3615xs). I boot using "DS3615xs 6.2 Baremetal with Jun's Mod v1.03b", but I see these lines (see serial.out):

patching file etc/synoinfo.conf
Hunk #1 FAILED at 291.
1 out of 1 hunk FAILED -- saving rejects to file etc/synoinfo.conf.rej

Is there a way to fix this? Why do I see it?

I am attaching:

- /etc/synoinfo.conf
- /etc.defaults/synoinfo.conf
- jun.patch (the one everyone has)

Thanks

My setup info:

- DSM version prior to update: DSM 6.1.7-15284 update 3
- DSM after update: 6.2.2-24922
- Loader version and model: JUN'S LOADER v1.03b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: ESXi 6.5 (HP MicroServer Gen8 with Xeon CPU E31260L 1265v2) (4 virtual SATA hard drives: 50Mb 50Gb 1.7Tb 2.7Tb)
- Additional comments: It failed in the beginning, as all services, including networking, would shut down a few seconds after logging in to DSM (SYNO.Core.Desktop.Initdata_1_get would call "usr/syno/bin/synopkg onoffall stop"). I saw other posts mentioning an old .xpenoboot folder in / as the cause, so I deleted it (cd /; sudo rm -r .xpenoboot) and the upgrade worked fine.

Attachments: jun.patch, serial.out, synoinfo.conf.etc, synoinfo.conf.etc.defaults

-

- Outcome of the installation/update: SUCCESSFUL
- DSM version prior to update: DSM 6.1.7-15284 update 3
- Loader version and model: JUN'S LOADER v1.03b - DS3615xs
- Using custom extra.lzma: NO
- Installation type: VM - ESXi 6.5 (HP MicroServer Gen8 with Xeon CPU E31260L 1265v2) (4 virtual SATA hard drives: 50Mb 50Gb 1.7Tb 2.7Tb)
- Additional comments: It failed in the beginning, as all services, including networking, would shut down a few seconds after logging in to DSM (SYNO.Core.Desktop.Initdata_1_get would call "usr/syno/bin/synopkg onoffall stop"). I saw other posts mentioning an old .xpenoboot folder in / as the cause, so I deleted it (cd /; sudo rm -r .xpenoboot) and the upgrade worked fine.

-

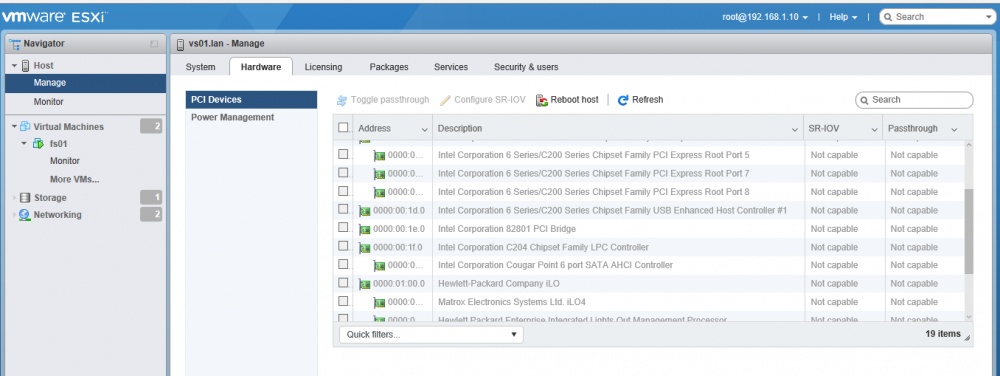

HP Gen 8 esxi, passhtrough controller, no disks visble?

petersnows replied to NoFate's topic in DSM 6.x

Hi pigr8,

"the cougarpoint is passed through to the xpenology vm": how did you pass through the Cougar Point PCI controller? My ESXi 6.5 does not allow that. Can you send photos of your setup?

Pedro

-

So far I was able to resolve this by doing the following:

- syno_poweroff_task -d
- ssh again
- mdadm --detail /dev/md3 (get the UUID; mine is bbe73c5c:694d1437:20f54790:be2b9bfb)

root@fs02:~# mdadm --detail /dev/md3
/dev/md3:
Version : 1.2
Creation Time : Sun Apr 16 19:58:33 2017
Raid Level : raid1
Array Size : 2925444544 (2789.92 GiB 2995.66 GB)
Used Dev Size : 2925444544 (2789.92 GiB 2995.66 GB)
Raid Devices : 1
Total Devices : 1
Persistence : Superblock is persistent
Update Time : Wed Jun 28 08:09:20 2017
State : clean
Active Devices : 1
Working Devices : 1
Failed Devices : 0
Spare Devices : 0
Name : fs03:3
UUID : bbe73c5c:694d1437:20f54790:be2b9bfb
Events : 5
Number Major Minor RaidDevice State
0 8 35 0 active sync /dev/sdc3
root@fs02:~#

- mdadm --stop /dev/md3
- btrfs check --repair /dev/md3
- mdadm -Cf /dev/md3 -e1.2 -n1 -l1 /dev/sdc3 -ubbe73c5c:694d1437:20f54790:be2b9bfb
- reboot

Commands to check the status:

cat /proc/mdstat
mdadm --detail /dev/md3
mdadm --examine /dev/sdc3

So far this issue has happened twice. It has been OK for the past 2 days. Let's see how it goes.
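The UUID step above is the one where a copy-paste slip would matter most, so here is a small sketch that pulls the UUID out of saved `mdadm --detail` text and assembles the same re-create command as an argument list. It only prints the command and never runs it; the function names `array_uuid` and `recreate_command` are hypothetical, and the device paths are simply the ones from this post.

```python
import re
from typing import List, Optional


def array_uuid(detail_text: str) -> Optional[str]:
    """Extract the array UUID from the text printed by `mdadm --detail`."""
    match = re.search(r"UUID\s*:\s*([0-9a-f]{8}(?::[0-9a-f]{8}){3})", detail_text)
    return match.group(1) if match else None


def recreate_command(md_device: str, member: str, uuid: str) -> List[str]:
    """Build the same single-disk RAID1 re-create command used in the post."""
    return ["mdadm", "-Cf", md_device, "-e1.2", "-n1", "-l1", member, "-u" + uuid]


# Fragment of the output quoted above.
detail = "Name : fs03:3 UUID : bbe73c5c:694d1437:20f54790:be2b9bfb Events : 5"
uuid = array_uuid(detail)
if uuid is not None:
    print(" ".join(recreate_command("/dev/md3", "/dev/sdc3", uuid)))
```

Keeping the command as a list makes it easy to review each argument before typing anything on the NAS itself.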

-

One or more raid groups/ssd caches are crashed.

I have a volume 3 that shows up as crashed. It had been working fine for more than a week. I can access it, though (all the files are fine). I would like to know how to change the HD/volume/RAID group status. I actually don't know why it shows as crashed.

- HP MicroServer Gen8
- ESXi 6.5
- 3TB hard drive, RDM (Raw Device Mapping)
- 6.1.15047
- Jun 1.02b

** Mdstat

    root@fs02:/etc/space# cat /proc/mdstat
    Personalities : [linear] [raid0] [raid1] [raid10] [raid6] [raid5] [raid4] [raidF1]
    md2 : active raid1 sdb5[0]
          47695808 blocks super 1.2 [1/1] [U]

    md3 : active raid1 sdc3[0](E)
          2925444544 blocks super 1.2 [1/1] [E]

    md1 : active raid1 sdc2[1] sdb2[0]
          2097088 blocks [12/2] [UU__________]

    md0 : active raid1 sdc1[1] sdb1[0]
          2490176 blocks [12/2] [UU__________]

    unused devices: <none>
    root@fs02:/etc/space#

** mdadm

    root@fs02:/etc/space# mdadm --detail /dev/md3
    /dev/md3:
            Version : 1.2
      Creation Time : Sun Apr 16 19:58:33 2017
         Raid Level : raid1
         Array Size : 2925444544 (2789.92 GiB 2995.66 GB)
      Used Dev Size : 2925444544 (2789.92 GiB 2995.66 GB)
       Raid Devices : 1
      Total Devices : 1
        Persistence : Superblock is persistent

        Update Time : Tue Jun 27 23:15:13 2017
              State : clean
     Active Devices : 1
    Working Devices : 1
     Failed Devices : 0
      Spare Devices : 0

               Name : fs03:3
               UUID : bbe73c5c:694d1437:20f54790:be2b9bfb
             Events : 5

        Number   Major   Minor   RaidDevice State
           0       8       35        0      active sync   /dev/sdc3
    root@fs02:/etc/space#

** mount

    root@fs02:/etc/space# mount
    /dev/md0 on / type ext4 (rw,relatime,journal_checksum,barrier,data=ordered)
    none on /dev type devtmpfs (rw,nosuid,noexec,relatime,size=1022480k,nr_inodes=255620,mode=755)
    none on /dev/pts type devpts (rw,nosuid,noexec,relatime,gid=5,mode=620,ptmxmode=000)
    none on /proc type proc (rw,nosuid,nodev,noexec,relatime)
    none on /sys type sysfs (rw,nosuid,nodev,noexec,relatime)
    /tmp on /tmp type tmpfs (rw,relatime)
    /run on /run type tmpfs (rw,nosuid,nodev,relatime,mode=755)
    /dev/shm on /dev/shm type tmpfs (rw,nosuid,nodev,relatime)
    none on /sys/fs/cgroup type tmpfs (rw,relatime,size=4k,mode=755)
    cgmfs on /run/cgmanager/fs type tmpfs (rw,relatime,size=100k,mode=755)
    cgroup on /sys/fs/cgroup/cpuset type cgroup (rw,relatime,cpuset,release_agent=/run/cgmanager/agents/cgm-release-agent.cpuset,clone_children)
    cgroup on /sys/fs/cgroup/cpu type cgroup (rw,relatime,cpu,release_agent=/run/cgmanager/agents/cgm-release-agent.cpu)
    cgroup on /sys/fs/cgroup/cpuacct type cgroup (rw,relatime,cpuacct,release_agent=/run/cgmanager/agents/cgm-release-agent.cpuacct)
    cgroup on /sys/fs/cgroup/memory type cgroup (rw,relatime,memory,release_agent=/run/cgmanager/agents/cgm-release-agent.memory)
    cgroup on /sys/fs/cgroup/devices type cgroup (rw,relatime,devices,release_agent=/run/cgmanager/agents/cgm-release-agent.devices)
    cgroup on /sys/fs/cgroup/freezer type cgroup (rw,relatime,freezer,release_agent=/run/cgmanager/agents/cgm-release-agent.freezer)
    cgroup on /sys/fs/cgroup/blkio type cgroup (rw,relatime,blkio,release_agent=/run/cgmanager/agents/cgm-release-agent.blkio)
    none on /proc/bus/usb type devtmpfs (rw,nosuid,noexec,relatime,size=1022480k,nr_inodes=255620,mode=755)
    none on /sys/kernel/debug type debugfs (rw,relatime)
    securityfs on /sys/kernel/security type securityfs (rw,relatime)
    /dev/mapper/vol1-origin on /volume1 type ext4 (rw,relatime,journal_checksum,synoacl,data=writeback,jqfmt=vfsv0,usrjquota=aquota.user,grpjquota=aquota.group)
    /dev/md3 on /volume3 type btrfs (rw,relatime,synoacl,nospace_cache,flushoncommit_threshold=1000,metadata_ratio=50)
    none on /config type configfs (rw,relatime)
    /dev/mapper/vol1-origin on /opt type ext4 (rw,relatime,journal_checksum,synoacl,data=writeback,jqfmt=vfsv0,usrjquota=aquota.user,grpjquota=aquota.group)
    none on /proc/fs/nfsd type nfsd (rw,relatime)
    /dev/mapper/vol1-origin on /volume1/@docker/aufs type ext4 (rw,relatime,journal_checksum,synoacl,data=writeback,jqfmt=vfsv0,usrjquota=aquota.user,grpjquota=aquota.group)
    root@fs02:/etc/space#

** console logs

    [91206.299324] ata3.00: exception Emask 0x0 SAct 0x0 SErr 0x0 action 0x0
    [91206.301555] ata3.00: irq_stat 0x40000000
    [91206.303097] ata3.00: failed command: WRITE DMA
    [91206.304644] ata3.00: cmd ca/00:08:80:06:4c/00:00:00:00:00/e0 tag 28 dma 4096 out
    [91206.304644] res 41/02:00:00:00:00/00:00:00:00:00/00 Emask 0x1 (device error)
    [91206.309667] ata3.00: status: { DRDY ERR }
    [91206.699074] ata3.00: exception Emask 0x0 SAct 0x0 SErr 0x0 action 0x0
    [91206.701293] ata3.00: irq_stat 0x40000000
    [91206.702640] ata3.00: failed command: WRITE DMA
    [91206.704173] ata3.00: cmd ca/00:08:80:06:4c/00:00:00:00:00/e0 tag 29 dma 4096 out
    [91206.704173] res 41/02:00:00:00:00/00:00:00:00:00/00 Emask 0x1 (device error)
    [91206.709172] ata3.00: status: { DRDY ERR }
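To spot which array carries the `[E]` marker without reading the whole dump, the mdstat text from this post can be parsed with a short Python sketch. The function names `parse_mdstat` and `arrays_with_error_flag` are hypothetical, the `SAMPLE` text is a shortened copy of the output above, and the sketch assumes the usual two-line-per-array layout of /proc/mdstat. It only reports the status string as printed and does not interpret what `E` means on DSM.

```python
import re

# Array header line, e.g. "md3 : active raid1 sdc3[0](E)".
ARRAY_RE = re.compile(r"^(md\d+)\s*:\s*(\w+)\s+(\w+)\s*(.*)$")
# Status on the following line, e.g. "[1/1] [E]" or "[12/2] [UU__________]".
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s+\[([A-Z_]+)\]")


def parse_mdstat(text: str) -> dict:
    """Map each md array name to its state, level, members and status string."""
    arrays = {}
    current = None
    for line in text.splitlines():
        header = ARRAY_RE.match(line)
        if header:
            current = header.group(1)
            arrays[current] = {
                "state": header.group(2),
                "level": header.group(3),
                "members": header.group(4).split(),
                "status": "",
            }
            continue
        status = STATUS_RE.search(line)
        if status and current is not None:
            arrays[current]["status"] = status.group(3)
    return arrays


def arrays_with_error_flag(arrays: dict) -> list:
    """Return names of arrays whose status string contains the letter E."""
    return [name for name, info in arrays.items() if "E" in info["status"]]


# Shortened copy of the /proc/mdstat output quoted in the post.
SAMPLE = """\
Personalities : [linear] [raid0] [raid1]
md2 : active raid1 sdb5[0]
      47695808 blocks super 1.2 [1/1] [U]

md3 : active raid1 sdc3[0](E)
      2925444544 blocks super 1.2 [1/1] [E]

md1 : active raid1 sdc2[1] sdb2[0]
      2097088 blocks [12/2] [UU__________]

unused devices: <none>
"""

if __name__ == "__main__":
    print(arrays_with_error_flag(parse_mdstat(SAMPLE)))
```

On a live system the same functions could be fed the contents of /proc/mdstat read from a file; here the text is embedded so the example runs anywhere.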

-

Trial of Nanoboot (4493) - 5.0.3.1

petersnows replied to vtrchan's topic in DSM 5.2 and earlier (Legacy)

Thanks, that worked for me! N54L + Proxmox 3.4 + XPEnoboot_DS3615xs_5.1-5022.3.img

-
