Kanedo

Everything posted by Kanedo

-

For VMware ESXi, I've been using "Other Linux x64" without issues. Would love to hear if there's a better one to pick. The only order that matters is that you configure VMware to boot from the loader SATA drive.

-

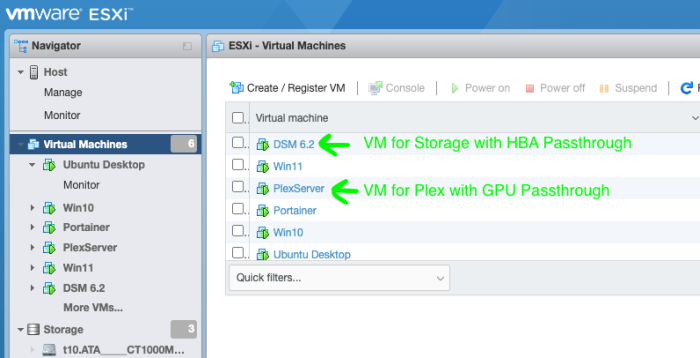

I see. I do use RAID6 on my production server, which is still on DSM 6.2. I'm only experimenting with DSM 7 on a separate box with a single disk at the moment, so I'll have to add more disks for further testing. So yeah, I see your point: it's problematic for those who want to use both an HBA and an iGPU on bare-metal installations. @cabldevil Is running separate virtual machines not an option for you? I assume you need transcoding for Plex, right? This is how I run mine.

-

Personally, I run DSM in a separate VM from the Plex VM. I pass the HBA through to the DSM VM, and I pass the GPU through to the Plex VM (Ubuntu). This actually affords me more flexibility, as I can use a suitable Synology model such as DS3622xs purely for storage while using an Nvidia GPU for transcoding. I've also gotten iGPU transcoding working this way by passing the iGPU through to the Plex VM.

-

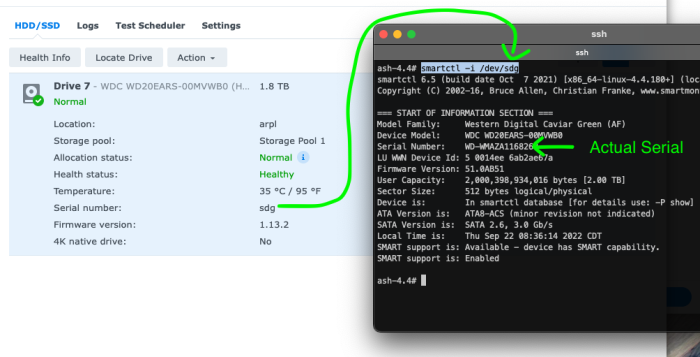

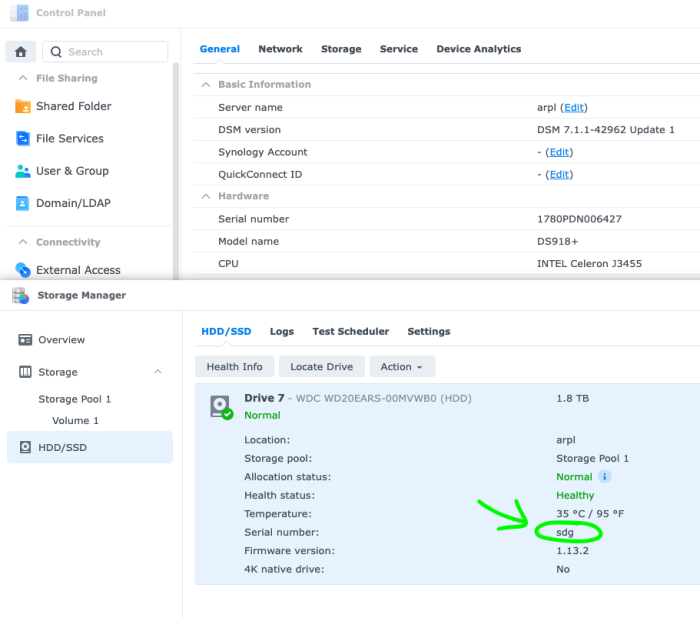

There is a workaround for that problem if you're comfortable with SSHing into the machine: run `smartctl -i /dev/sdX` and it'll show you the real serial number.
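For anyone who wants to check every drive in one go, here is a rough sketch of that idea. It assumes `smartctl -i` prints a line beginning with "Serial Number:" (capitalisation can differ between drive types, so the match is case-insensitive) and that the drives show up as /dev/sd?. The helper name `serial_from_info` is made up for this example.

```shell
#!/bin/sh
# serial_from_info (hypothetical helper): read `smartctl -i` output on stdin
# and print the value of the first "Serial Number" line.
serial_from_info() {
    awk -F': *' 'tolower($1) == "serial number" { print $2; exit }'
}

# Only attempt the scan when smartctl is actually installed.
if command -v smartctl >/dev/null 2>&1; then
    for dev in /dev/sd?; do
        # Skip the unexpanded glob or anything that is not a block device.
        [ -b "$dev" ] || continue
        printf '%s %s\n' "$dev" "$(smartctl -i "$dev" | serial_from_info)"
    done
fi
```

Run it as root over SSH. Drives past /dev/sdz are not matched by the /dev/sd? pattern, so widen it if you have that many.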

-

I've had no issues getting my HBA working with DS918+ using the following setup:

- Host type: Bare-metal
- HBA: DELL Perc H310 cross-flashed to LSI 9211-8i with IT firmware 20.00.07.00
- Drive Connection: HBA connected to SATA drive via breakout cable
- Redpill Loader: arpl v0.4-alpha9
- DSM: 7.1.1-42962 Update 1

With the above setup, I was able to get my HBA working with DS918+, DS3617xs, and DS3622xs.

-

TinyCore RedPill loader (TCRP) - Development release 0.9

Kanedo replied to pocopico's topic in Developer Discussion Room

No need. I resized the 3rd partition of the img to be just a little bit smaller and got it to fit on a 1GB USB stick. What would make the most sense is for the 3rd partition of the img to be as small as possible and then resize to fit the USB stick on first boot. This is merely a suggestion.

-

TinyCore RedPill loader (TCRP) - Development release 0.9

Kanedo replied to pocopico's topic in Developer Discussion Room

Yeah, I'm guessing it's the difference between how drive makers define 1GB (1000^3 bytes) and the binary 1GiB (1024^3 bytes). It'd be even better if the image could be as small as possible and then do the expansion (resize) upon first boot.

-

@fbelavenuto. I'm running bare-metal with v0.4-alpha6 and it is not working with LSI 9211 HBA using DS3615, DS3617, or DS3622. It works with TinyCore-Redpill just fine, which loads mpt2sas. Any chance this can be supported?

-

TinyCore RedPill loader (TCRP) - Development release 0.9

Kanedo replied to pocopico's topic in Developer Discussion Room

Hi @pocopico, could you make the image a tad smaller? I have a 1000MB USB stick and your 1GB image doesn't fit on it. Thanks.

-

RedPill - the new loader for 6.2.4 - Discussion

Kanedo replied to ThorGroup's topic in Developer Discussion Room

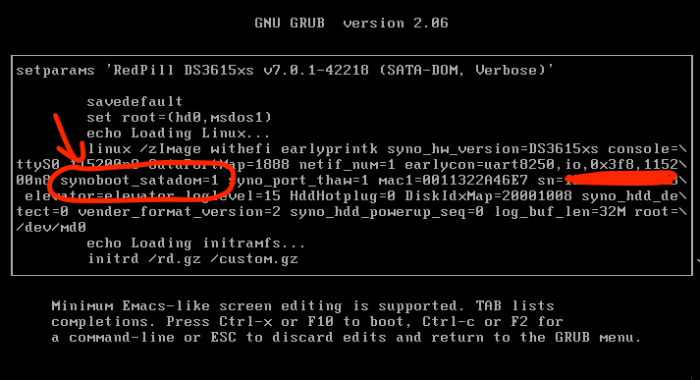

If you use tinycore-redpill, boot as IDE. After you finish building the bootloader via `./rploader.sh build ...`, you can switch back to SATA. Here's how I got SATA working:

Use SATA DOM boot arg

For this to work, you must choose a model that supports SATA DOM, such as DS3615xs. All you have to do is add the following boot arg:

`synoboot_satadom=1`

You don't even need VID or PID.

-

For those of you with a Supermicro chassis...

Kanedo replied to eptesicus's topic in Hardware Modding

Let us know if it works in a startup script.

-

For those of you with a Supermicro chassis...

Kanedo replied to eptesicus's topic in Hardware Modding

No problem. Glad it worked. You'll have to run this after every reboot though, or you can put it in a startup script.

-

For those of you with a Supermicro chassis...

Kanedo replied to eptesicus's topic in Hardware Modding

Try this and see if it works:

`sudo find /sys/devices/ -type f -name locate -exec sh -c 'echo 0 > "$1"' -- {} \;`

-

It's because the enumeration of your disks is not contiguous. Notice that /dev/sde and /dev/sdf are missing from your list. When you set internalportcfg to 0xfff, you're assigning /dev/sda - /dev/sdl as disks 1-12. Because you have a gap in your enumeration (missing /dev/sde and /dev/sdf), the remaining 8 disks get pushed out to /dev/sdg - /dev/sdn. Since /dev/sdm and /dev/sdn fall outside of /dev/sda - /dev/sdl, two disks won't show up.

The easiest way to solve your problem is to simply increase the number of enumerated slots:

    maxdisks=16
    internalportcfg=0xffff
    usbportcfg=0x1f0000
    esataportcfg=0x0

With this config, you're allocating disks 1-16 (/dev/sda - /dev/sdp) for internal use.
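To make the bitmask arithmetic concrete, here is a small sketch. It assumes, as described above, that bit 0 of the mask corresponds to /dev/sda, bit 1 to /dev/sdb, and so on. The helper names `port_mask` and `slot_covered` are made up for illustration and are not part of DSM.

```shell
#!/bin/sh
# port_mask COUNT OFFSET (hypothetical helper):
# print a hex mask with COUNT bits set, starting at bit OFFSET.
port_mask() {
    printf '0x%x\n' $(( ((1 << $1) - 1) << $2 ))
}

# slot_covered MASK INDEX (hypothetical helper):
# succeed if bit INDEX (0 = /dev/sda, 1 = /dev/sdb, ...) is set in MASK.
slot_covered() {
    [ $(( ($1 >> $2) & 1 )) -eq 1 ]
}

port_mask 12 0    # 12 internal slots, starting at bit 0
port_mask 16 0    # 16 internal slots, starting at bit 0
port_mask 5 16    # 5 USB slots placed right after the 16 internal ones

# /dev/sdm is the 13th device letter, so its zero-based index is 12.
for mask in 0xfff 0xffff; do
    if slot_covered "$mask" 12; then
        echo "sdm is covered by $mask"
    else
        echo "sdm is NOT covered by $mask"
    fi
done
```

The three `port_mask` calls reproduce the 0xfff, 0xffff and 0x1f0000 values from the post, and the loop shows why /dev/sdm drops out with the 12-slot mask but not with the 16-slot one.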

-

The proper way to move synoboot to a much higher enumeration is to change DiskIdxMap in grub.cfg. On Jun's 1.03b, the default is

    DiskIdxMap=0C

where 0C = Disk 13. Change it to something much higher, for example

    DiskIdxMap=1F

where 1F = Disk 32.

For more info: https://github.com/evolver56k/xpenology/blob/master/synoconfigs/Kconfig.devices#L245
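The hex value reads as a zero-based index, which is why 0C (12) shows up as Disk 13 and 1F (31) as Disk 32. Here is a quick sketch of the conversion. `diskidx_to_disk` is a hypothetical helper, and it only handles a single two-digit entry like the ones in this post.

```shell
#!/bin/sh
# diskidx_to_disk HEX (hypothetical helper):
# convert one zero-based hex DiskIdxMap entry to the 1-based disk number.
diskidx_to_disk() {
    echo $(( 0x$1 + 1 ))
}

diskidx_to_disk 0C   # default on Jun's 1.03b
diskidx_to_disk 1F   # the higher value suggested above
```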

-

This is a repost of an archive I posted in 2015. This method works for me on DSM 6.2 using Jun's loader 1.03b.

1) Enable SSH and ssh into your DiskStation
2) Become root ( sudo -i )
3) Make a mount point ( mkdir -p /tmp/mountMe )
4) cd into /dev ( cd /dev )
5) Mount synoboot1 to your mount point ( mount -t vfat synoboot1 /tmp/mountMe )
6) Profit!

    admin@DiskStation:~# sudo -i
    root@DiskStation:~# mkdir -p /tmp/mountMe
    root@DiskStation:~# cd /dev
    root@DiskStation:/dev# mount -t vfat synoboot1 /tmp/mountMe
    root@DiskStation:/dev# ls -l /tmp/mountMe
    total 2554
    -rwxr-xr-x 1 root root 2605216 Aug 1 10:40 bzImage
    drwxr-xr-x 3 root root 2048 Aug 1 10:40 EFI
    drwxr-xr-x 6 root root 2048 Aug 1 10:40 grub
    -rwxr-xr-x 1 root root 103 Jul 3 15:09 GRUB_VER
    -rwxr-xr-x 1 root root 225 Aug 1 10:40 info.txt
    root@DiskStation:/dev#

-

Yes, I have that exact card and it works with the current xpenology boot image.

-

Terrific. Please report back your findings.

-

You can choose the default adapter in the network management section of DSM. Try to set the default adapter to your 1Gb NIC.

-

Totally agree! It all depends on the SATA controller chips used. There are a few Marvell chips that don't play too well with the latest XPE builds, so I'm very curious which chips these come with.

Official product page: http://www.hcipctech.com/Home/ProductCo ... &english=2

I believe the 19NVR3 uses the quad-core J1900. OP linked to the 18NVR3, which is the dual-core J1800 model. At a price of over $150 plus shipping for the J1900 model, I'm not sure this really is an ideal solution. Neither the J1800 nor the J1900 is a particularly powerful processor, and I think you can get more for your money elsewhere. By the time you max out the 13 SATA ports on your server, you might be concerned with a faster processor, ECC memory, and possibly 10Gb networking.

However, if all you want is the maximum number of SATA ports with low power consumption, this does seem like an option. That said, XPE may not be the ideal OS if you're going after low power. Something like UnRAID would typically use less power, since it doesn't spin up all the drives.

-

neuro1, there are two ways you can expose the Intel X520-DA2 to your XPE VM in ESXi.

1) IOMMU (VT-d). Basically, this is PCIe passthrough, if your CPU and motherboard support it. It allows you to assign your X520 for exclusive use by the XPE VM. XPE has built-in drivers for the X520, so it will see it as a physical card. The downside to this approach is that only your XPE VM has access to 10Gb outside the host.
2) Assign your X520 to a vSwitch. Then set one of your XPE VM's virtual network adapters to the VMXNET3 adapter type and attach it to your 10Gb vSwitch. This is the method I employ, so multiple VMs can all talk out over the 10Gb adapter.

-

Basically, if you want to load balance across multiple clients, link aggregation (bonding) of multiple 1GbE links is a perfectly acceptable solution. However, if your goal is to achieve more than 1Gb to a single client, I'm afraid the only practical and software-agnostic solution is a faster interface such as 10GbE.

-

I have this exact card. It worked with Nanoboot 5.1 via a kernel option. However, I cannot get it to work with XPEnoboot 5.2 at all. You'll be better off just forking over the cash for an LSI SAS2 card.

-

I have the same card. Apparently XPEnoboot broke support for some Marvell cards. It used to work with Nanoboot 5.1. So basically, I can't use those cards either until it's fixed in a future version of the bootloader.